Safety engineering

Safety engineering is an applied science closely related to systems engineering and its subset, system safety engineering. Safety engineering assures that a life-critical system behaves as needed even when components fail. In practical terms, the term "safety engineering" refers to any act of accident prevention by a person qualified in the field. Safety engineering is often reactive, responding to adverse events, also described as "incidents," as reflected in accident statistics. This arises largely because of the complexity and difficulty of collecting and analyzing data on "near misses."

Increasingly, the safety review is being recognized as an important risk management tool. Failure to identify risks to safety, and the consequent inability to address or "control" these risks, can result in massive costs, both human and economic. The multidisciplinary nature of safety engineering means that a very broad array of professionals is actively involved in accident prevention.

The task of safety engineers

The majority of those practicing safety engineering are employed in industry to keep workers safe on a day-to-day basis.

Safety engineers distinguish different extents of defective operation. A failure is "the inability of a system or component to perform its required functions within specified performance requirements," while a fault is "a defect in a device or component, for example: A short circuit or a broken wire".[1] System-level failures are caused by lower-level faults, which are ultimately caused by basic component faults. (Some texts reverse or confuse these two terms.[2]) The unexpected failure of a device that was operating within its design limits is a primary failure, while the expected failure of a component stressed beyond its design limits is a secondary failure. A device which appears to malfunction because it has responded as designed to a bad input is suffering from a command fault.[2]

A critical fault endangers one or a few people. A catastrophic fault endangers, harms, or kills a significant number of people.

Safety engineers also identify different modes of safe operation: A probabilistically safe system has no single point of failure, and enough redundant sensors, computers, and effectors so that it is very unlikely to cause harm (usually "very unlikely" means, on average, less than one human life lost in a billion hours of operation). An inherently safe system is a clever mechanical arrangement that cannot be made to cause harm—obviously the best arrangement, but this is not always possible. A fail-safe system is one that cannot cause harm when it fails. A fault-tolerant system can continue to operate with faults, though its operation may be degraded in some fashion.

These terms combine to describe the safety needed by systems: For example, most biomedical equipment is only "critical," and often another identical piece of equipment is nearby, so it can be merely "probabilistically fail-safe." Train signals can cause "catastrophic" accidents (imagine chemical releases from tank-cars) and are usually "inherently safe." Aircraft "failures" are "catastrophic" (at least for their passengers and crew) so aircraft are usually "probabilistically fault-tolerant." Without any safety features, nuclear reactors might have "catastrophic failures," so real nuclear reactors are required to be at least "probabilistically fail-safe," and some, such as pebble bed reactors, are "inherently fault-tolerant."

The process

Ideally, safety engineers take an early design of a system, analyze it to find what faults can occur, and then propose safety requirements in design specifications up front and changes to existing systems to make the system safer. In an early design stage, often a fail-safe system can be made acceptably safe with a few sensors and some software to read them. Probabilistic fault-tolerant systems can often be made by using more, but smaller and less-expensive pieces of equipment.

Far too often, rather than actually influencing the design, safety engineers are assigned to prove that an existing, completed design is safe. If a safety engineer then discovers significant safety problems late in the design process, correcting them can be very expensive. This type of error has the potential to waste large sums of money.

The exception to this conventional approach is the way some large government agencies approach safety engineering from a more proactive and proven process perspective. This is known as System Safety. The System Safety philosophy, supported by the System Safety Society and many other organizations, is applied to complex and critical systems, such as commercial airliners, military aircraft, munitions and complex weapon systems, spacecraft and space systems, rail and transportation systems, air traffic control systems, and other complex and safety-critical industrial systems. The proven System Safety methods and techniques aim to prevent, eliminate, and control hazards and risks through design influence exerted by a collaboration of key engineering disciplines and product teams. Software safety is a fast-growing field, since the functionality of modern systems is increasingly being put under the control of software. The whole concept of system safety and software safety, as a subset of systems engineering, is to influence the design of safety-critical systems by conducting several types of hazard analyses to identify risks and to specify design safety features and procedures that mitigate risk to acceptable levels before the system is certified.

Additionally, failure mitigation can go beyond design recommendations, particularly in the area of maintenance. There is an entire realm of safety and reliability engineering known as "Reliability Centered Maintenance" (RCM), which is a discipline that is a direct result of analyzing potential failures within a system and determining maintenance actions that can mitigate the risk of failure. This methodology is used extensively on aircraft and involves understanding the failure modes of the serviceable replaceable assemblies in addition to the means to detect or predict an impending failure. Every automobile owner is familiar with this concept when they take in their car to have the oil changed or brakes checked. Even filling up one's car with gas is a simple example of a failure mode (failure due to fuel starvation), a means of detection (fuel gauge), and a maintenance action (filling the tank).

For large-scale complex systems, hundreds if not thousands of maintenance actions can result from the failure analysis. These maintenance actions are based on conditions (for example, a gauge reading or a leaky valve), hard conditions (for example, a component is known to fail after 100 hours of operation with 95 percent certainty), or require inspection to determine the maintenance action (such as metal fatigue). The Reliability Centered Maintenance concept then analyzes each individual maintenance item for its risk contribution to safety, mission, operational readiness, or cost to repair if a failure does occur. All of the maintenance actions are then bundled into maintenance intervals so that maintenance does not occur around the clock but at regular intervals. This bundling process introduces further complexity, as it might stretch some maintenance cycles, thereby increasing risk, but shorten others, thereby potentially reducing risk. The end result is a comprehensive maintenance schedule, purpose-built to reduce operational risk and ensure acceptable levels of operational readiness and availability.
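
The bundling step can be illustrated with a minimal Python sketch. It is only one possible reading of the process: the task names, the ideal intervals, the scheduled slots, and the nearest-slot rule are all assumptions made for illustration, not part of any Reliability Centered Maintenance standard.

```python
def bundle(ideal_hours, slots):
    """Assign each task to the scheduled interval nearest its ideal interval."""
    plan = {}
    for task, ideal in ideal_hours.items():
        chosen = min(slots, key=lambda slot: abs(slot - ideal))
        if chosen > ideal:
            change = "stretched"    # done later than ideal: risk may rise
        elif chosen < ideal:
            change = "shortened"    # done earlier than ideal: risk may fall
        else:
            change = "unchanged"
        plan[task] = (chosen, change)
    return plan

# Hypothetical ideal intervals, in operating hours, from a failure analysis.
ideal_hours = {"oil change": 90, "brake inspection": 260, "filter swap": 100}
slots = [100, 250, 500]  # assumed scheduled maintenance intervals

plan = bundle(ideal_hours, slots)
for task, (hours, change) in plan.items():
    print(f"{task}: every {hours} h ({change})")
```

A real schedule would weigh each stretched task by its risk contribution rather than simply picking the nearest slot.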

Analysis techniques

The two most common fault modeling techniques are called "failure modes and effects analysis" and "fault tree analysis." These techniques are just ways of finding problems and of making plans to cope with failures, as in Probabilistic Risk Assessment (PRA or PSA). One of the earliest complete studies using PRA techniques on a commercial nuclear plant was the Reactor Safety Study (RSS), edited by Prof. Norman Rasmussen.[3]

Failure modes and effects analysis

In the technique known as "failure mode and effects analysis" (FMEA), an engineer starts with a block diagram of a system. The safety engineer then considers what happens if each block of the diagram fails. The engineer then draws up a table in which failures are paired with their effects and an evaluation of the effects. The design of the system is then corrected, and the table adjusted until the system is not known to have unacceptable problems. It is very helpful to have several engineers review the failure modes and effects analysis.
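
The table described above can be sketched in Python as follows. This is a minimal illustration, not a standard FMEA format: the `FmeaRow` fields, the example blocks of an imaginary cooling loop, the 1 to 4 severity scale, and the acceptance threshold are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class FmeaRow:
    block: str          # block taken from the system block diagram
    failure_mode: str   # how the block is assumed to fail
    effect: str         # resulting effect on the system
    severity: int       # assumed scale: 1 (negligible) to 4 (catastrophic)

# Hypothetical rows for an imaginary pump-driven cooling loop.
rows = [
    FmeaRow("pump", "stops running", "loss of coolant flow", 4),
    FmeaRow("flow sensor", "reads zero", "spurious shutdown", 2),
    FmeaRow("relief valve", "sticks closed", "overpressure in loop", 4),
]

ACCEPTABLE_SEVERITY = 2  # assumed threshold for this sketch

def unacceptable(table, limit=ACCEPTABLE_SEVERITY):
    """Return the rows whose severity exceeds the acceptable limit."""
    return [row for row in table if row.severity > limit]

open_items = unacceptable(rows)
for row in open_items:
    print(f"{row.block}: {row.failure_mode} -> {row.effect} "
          f"(severity {row.severity})")
```

In this sketch the design would be revised and the table re-evaluated until `open_items` is empty, mirroring the iteration described in the text.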

Fault tree analysis

First, a little history to put fault tree analysis (FTA) into perspective. It came out of work on the Minuteman Missile System. All the digital circuits used in the Minuteman Missile System were designed and tested extensively. The failure probabilities as well as the failure modes were well understood and documented for each circuit. GTE/Sylvania, one of the prime contractors, discovered that the probability of failure for various components was easily constructed from the Boolean expressions for those components. (Note that there was one complex digital system constructed by GTE/Sylvania about that time with no logic diagrams, only pages of Boolean expressions. These worked out nicely because logic diagrams are designed to be read left to right, the way the engineer creates the design, but when they fail the technicians must read them from right to left.) In any case, this analysis of hardware led to the use of the same symbology and thinking for what (with additional symbols) is now known as a fault tree. Note that the de Morgan's equivalent of a fault tree is the success tree.

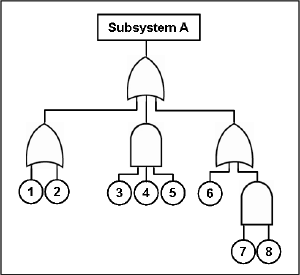

In the technique known as "fault tree analysis," an undesired effect is taken as the root ("top event") of a tree of logic. There should be only one top event, and all concerns must tree down from it. This is a consequence of another Minuteman Missile System requirement that all analysis be top-down; by fiat there was to be no bottom-up analysis. Each situation that could cause the top event is then added to the tree as a series of logic expressions. When fault trees are labeled with actual failure probabilities, which in practice are often unavailable because of the expense of testing, computer programs can calculate the probability of the top event from the tree.
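
The calculation such programs perform can be sketched for a very small tree. The Python example below assumes that the basic events are statistically independent, which real analyses cannot always assume, and all event names and probabilities are invented for illustration.

```python
from math import prod

def and_gate(*probs):
    """All inputs must fail: multiply the independent probabilities."""
    return prod(probs)

def or_gate(*probs):
    """Any input failing is enough: one minus the chance that none fail."""
    return 1.0 - prod(1.0 - p for p in probs)

# Hypothetical basic-event probabilities.
p_pump_a = 1e-3
p_pump_b = 1e-3
p_power = 1e-4

# Top event "loss of cooling": both pumps fail, OR the power supply fails.
p_both_pumps = and_gate(p_pump_a, p_pump_b)
p_top = or_gate(p_both_pumps, p_power)

print(f"P(both pumps fail) = {p_both_pumps:.3e}")
print(f"P(top event)       = {p_top:.6e}")
```

With these assumed numbers the single power supply dominates the result, which is the kind of insight a quantified fault tree is meant to expose.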

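The tree is usually written out using conventional logic gate symbols. A cut set is a combination of basic events that, occurring together, leads to the top event; in the diagram it appears as a route through the tree from the initiating events to the fault. A minimal cut set is a cut set from which no event can be removed without it ceasing to cause the top event, that is, the shortest credible route through the tree.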
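
Cut sets can be enumerated mechanically. The Python sketch below uses the common set-based reading, in which a cut set is a combination of basic events sufficient to cause the top event and a minimal cut set contains no smaller cut set. The nested-tuple tree encoding and the event names are assumptions made for this illustration.

```python
from itertools import product

def cut_sets(node):
    """Return the cut sets of a tree given as nested ("AND"/"OR", [children])."""
    if isinstance(node, str):               # a basic event
        return [frozenset([node])]
    gate, children = node
    child_sets = [cut_sets(child) for child in children]
    if gate == "OR":                        # any one child's cut set suffices
        return [cs for sets in child_sets for cs in sets]
    if gate == "AND":                       # need one cut set from every child
        return [frozenset().union(*combo) for combo in product(*child_sets)]
    raise ValueError(f"unknown gate: {gate}")

def minimal(sets):
    """Drop every cut set that strictly contains another cut set."""
    unique = set(sets)
    return [s for s in unique if not any(other < s for other in unique)]

# Hypothetical tree: the top event occurs if both pumps fail, or power fails,
# or power and pump A fail together (a redundant, non-minimal path).
tree = ("OR", [
    ("AND", ["pump_a", "pump_b"]),
    "power",
    ("AND", ["power", "pump_a"]),
])

minimal_cut_sets = minimal(cut_sets(tree))
for cs in sorted(minimal_cut_sets, key=len):
    print(sorted(cs))
```

Here the path through "power" and "pump_a" is discarded because "power" alone already causes the top event.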

Some industries use both fault trees and event trees (see Probabilistic Risk Assessment). An event tree starts from an undesired initiator (loss of critical supply, component failure, etc.) and follows possible further system events through to a series of final consequences. As each new event is considered, a new node is added to the tree, with the probability split between the two branches. The probabilities of a range of "top events" arising from the initial event can then be seen.
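
The branching just described can be sketched in Python. The example is deliberately simplified: it assumes every safety function is challenged on every path and that branch probabilities are independent, and the initiator probability and the two safety functions are hypothetical.

```python
from itertools import product

def event_tree(p_init, branches):
    """Map each combination of branch outcomes to its probability.

    Keys are tuples of booleans, one per safety function, where True
    means that function failed on that path.
    """
    names = list(branches)
    outcomes = {}
    for states in product((False, True), repeat=len(names)):
        p = p_init
        for name, failed in zip(names, states):
            p *= branches[name] if failed else 1.0 - branches[name]
        outcomes[states] = p
    return outcomes

# Hypothetical numbers: probability of the initiating event, then the
# failure probability of each safety function that responds to it.
initiator_prob = 1e-2
branch_fail = {"alarm": 0.1, "shutdown valve": 0.05}

outcomes = event_tree(initiator_prob, branch_fail)
for states, p in outcomes.items():
    label = ", ".join(
        f"{name} {'fails' if failed else 'works'}"
        for name, failed in zip(branch_fail, states)
    )
    print(f"{label}: {p:.2e}")
```

The outcome probabilities sum to the probability of the initiator, since every path through the tree starts from it.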

Classic programs include the Electric Power Research Institute's (EPRI) CAFTA software, which is used by almost all the U.S. nuclear power plants and by a majority of U.S. and international aerospace manufacturers, and the Idaho National Laboratory's SAPHIRE, which is used by the U.S. Government to evaluate the safety and reliability of nuclear reactors, the Space Shuttle, and the International Space Station.

Safety certification

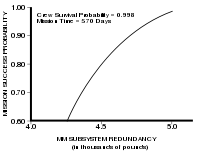

Usually a failure in safety-certified systems is acceptable if, on average, less than one life per 10^9 hours of continuous operation is lost to failure. Most Western nuclear reactors, medical equipment, and commercial aircraft are certified to this level. The cost versus loss of lives has been considered appropriate at this level (by FAA for aircraft under Federal Aviation Regulations).

Preventing failure

Probabilistic fault tolerance: Adding redundancy to equipment and systems

Once a failure mode is identified, it can usually be prevented entirely by adding extra equipment to the system. For example, nuclear reactors contain dangerous radiation, and nuclear reactions can cause so much heat that no substance might contain them. Therefore reactors have emergency core cooling systems to keep the temperature down, shielding to contain the radiation, and engineered barriers (usually several, nested, surmounted by a containment building) to prevent accidental leakage.

Most biological organisms have a certain amount of redundancy: Multiple organs, multiple limbs, and so on.

For any given failure, a fail-over or redundancy can almost always be designed and incorporated into a system.
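
The effect of redundancy on failure probability can be sketched numerically. The Python example below assumes that redundant channels fail independently, which ignores common-cause failures such as a shared power supply, and the probabilities are invented for illustration.

```python
def all_fail(p_single, n):
    """Probability that all n independent redundant channels fail."""
    return p_single ** n

def channels_needed(p_single, target):
    """Smallest number of independent channels whose joint failure
    probability does not exceed the target."""
    if not (0.0 < p_single < 1.0) or target <= 0.0:
        raise ValueError("need 0 < p_single < 1 and target > 0")
    n = 1
    while all_fail(p_single, n) > target:
        n += 1
    return n

p_single = 1e-4   # hypothetical failure probability of one channel
target = 1e-9     # hypothetical acceptable failure probability

n = channels_needed(p_single, target)
print(f"{n} channels give a joint failure probability of "
      f"{all_fail(p_single, n):.1e}")
```

In practice the independence assumption is the weak point, which is why redundant channels are often built from dissimilar hardware.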

When does safety stop, where does reliability begin?

Assume there is a new design for a submarine. In the first case, as the prototype of the submarine is being moved to the testing tank, the main hatch falls off. This would be easily defined as an unreliable hatch. Now the submarine is submerged to 10,000 feet, whereupon the hatch falls off again, and all on board are killed. The failure is the same in both cases, but in the second case it becomes a safety issue. Most people tend to judge risk on the basis of the likelihood of occurrence. Other people judge risk on the basis of their magnitude of regret, and are likely unwilling to accept risk no matter how unlikely the event. The former make good reliability engineers, the latter make good safety engineers.

Perhaps there is a need to design a Humvee with a rocket launcher attached. The reliability engineer could make a good case for installing launch switches all over the vehicle, making it very likely someone can reach one and launch the rocket. The safety engineer could make an equally compelling case for putting only two switches at opposite ends of the vehicle which must both be thrown to launch the rocket, thus ensuring that the likelihood of an inadvertent launch is small. An additional irony is that it is unlikely that the two engineers can reconcile their differences, in which case a manager who does not understand the technology could choose one design over the other based on other criteria, like cost of manufacturing.
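
The tension between the two designs can be put in rough numbers. In the Python sketch below, the switch failure probabilities and the count of six switches are hypothetical, and the switches are assumed to fail independently.

```python
def any_of(p, n):
    """Probability that at least one of n independent events occurs."""
    return 1.0 - (1.0 - p) ** n

def all_of(p, n):
    """Probability that all n independent events occur."""
    return p ** n

p_spurious = 1e-3   # assumed: a switch actuates when it should not
p_dead = 1e-2       # assumed: a switch fails to work on demand

# Reliability engineer's design: six switches, any one launches.
many_switches = {
    "inadvertent launch": any_of(p_spurious, 6),
    "failure to launch": all_of(p_dead, 6),
}

# Safety engineer's design: two switches, both must be thrown.
two_switches = {
    "inadvertent launch": all_of(p_spurious, 2),
    "failure to launch": any_of(p_dead, 2),
}

for name, design in (("many", many_switches), ("two", two_switches)):
    for event, p in design.items():
        print(f"{name} switches, {event}: {p:.2e}")
```

Under these assumptions each design is better on one measure and worse on the other, which is why the two engineers cannot both be satisfied by a single arrangement.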

Inherent fail-safe design

When adding equipment is impractical (usually because of expense), then the least expensive form of design is often "inherently fail-safe." The typical approach is to arrange the system so that ordinary single failures cause the mechanism to shut down in a safe way. (For nuclear power plants, this is termed a passively safe design, although more than ordinary failures are covered.)

One of the most common fail-safe systems is the overflow tube in baths and kitchen sinks. If the valve sticks open, the water spills into the overflow tube rather than over the rim, where it would cause damage.

Another common example is that in an elevator the cable supporting the car keeps spring-loaded brakes open. If the cable breaks, the brakes grab rails, and the elevator cabin does not fall.

Inherent fail-safes are common in medical equipment, traffic and railway signals, communications equipment, and safety equipment.

Containing failure

It is also common practice to plan for the failure of safety systems through containment and isolation methods. The use of isolating valves, also known as the Block and bleed manifold, is very common in isolating pumps, tanks, and control valves that may fail or need routine maintenance. In addition, nearly all tanks containing oil or other hazardous chemicals are required to have containment barriers set up around them to contain 100 percent of the volume of the tank in the event of a catastrophic tank failure. Similarly, long pipelines have remote-closing valves periodically installed in the line so that in the event of failure, the entire pipeline is not lost. The goal of all such containment systems is to provide means of limiting the damage done by a failure to a small localized area.

Notes

- [1] Jane Radatz, IEEE Standard Glossary of Software Engineering Terminology (New York, NY: The Institute of Electrical and Electronics Engineers, 1990, ISBN 155937067X).

- [2] W.E. Vesely, F.F. Goldberg, N.H. Roberts, and D.F. Haasl, Fault Tree Handbook (Washington, DC: U.S. Nuclear Regulatory Commission, 1981, page V-1). NUREG-0492.

- [3] Norman C. Rasmussen, et al., Reactor Safety Study (Washington, DC: U.S. Nuclear Regulatory Commission, Appendix VI "Calculation of Reactor Accident Consequences." WASH-1400 NUREG-75-014).

References

- Dhillon, B.S. 2003. Engineering Safety: Fundamentals, Techniques, and Applications. London, UK: World Scientific. ISBN 981238328X.

- Lutz, Robyn R. 2000. "Software Engineering for Safety: A Roadmap" in Finkelstein, Anthony (ed.). 2000. The Future of Software Engineering. New York, NY: Association for Computing Machinery. ISBN 1581132530. Retrieved October 20, 2008.

- Marshall, Gilbert. 2000. Safety Engineering. Des Plaines, IL: American Society of Safety Engineers. ISBN 1885581289.

- Spellman, Frank R. 2004. Safety Engineering: Principles and Practices. Lanham, MD: Government Institutes. ISBN 0865879702.

- US DOD. 2000. Standard Practice for System Safety. Washington, DC: U.S. Department of Defense, 31 pages. MIL-STD-882D.

- US FAA. 2000. System Safety Handbook. Washington, DC: U.S. Federal Aviation Administration (FAA).

External links

All links retrieved December 22, 2022.

- Hardware Fault Tolerance – A discussion about redundancy schemes.

- American Society of Safety Professionals

- Board of Certified Safety Professionals

- System Safety Society

- The Safety and Reliability Society

Credits

New World Encyclopedia writers and editors rewrote and completed the Wikipedia article in accordance with New World Encyclopedia standards. This article abides by terms of the Creative Commons CC-by-sa 3.0 License (CC-by-sa), which may be used and disseminated with proper attribution. Credit is due under the terms of this license that can reference both the New World Encyclopedia contributors and the selfless volunteer contributors of the Wikimedia Foundation.

Note: Some restrictions may apply to use of individual images which are separately licensed.