Perception

Perception is the process by which stimulation of the senses is translated into meaningful experience. The understanding of this process has long been the topic of debate by philosophers, who largely question the source and validity of human knowledge—the study now known as epistemology—and in more recent times by psychologists.

In contemporary psychology, perception is defined as the brain’s interpretation of sensory information so as to give it meaning. The cognitive sciences give a more detailed account: perception is the process of acquiring, interpreting, selecting, and organizing sensory information. Many cognitive psychologists hold that, as we move about in the world, we create a model of how the world works. That is, we sense the objective world, but our sensations map to percepts, and these percepts are provisional, in the same sense that scientific hypotheses are provisional.

Organization of perception

The word perception comes from the Latin perception-, percipio, meaning "receiving, collecting, action of taking possession, apprehension with the mind or senses."[1]

Our perception of the external world begins with the senses, which lead us to generate empirical concepts representing the world around us, within a mental framework relating new concepts to preexisting ones. Perception takes place in the brain. Using sensory information as raw material, the brain creates perceptual experiences that go beyond what is sensed directly. Familiar objects tend to be seen as having a constant shape, even though the retinal images they cast change as they are viewed from different angles. This is because our perceptions have the quality of constancy, which refers to the tendency to sense and perceive objects as relatively stable and unchanging despite changing sensory stimulation and information.

Once we have formed a stable perception of an object, we can recognize it from almost any position, at almost any distance, and under almost any illumination. A white house looks like a white house by day or by night and from any angle. We see it as the same house. The sensory information may change as illumination and perspective change, but the object is perceived as constant.

The perception of an object as the same size regardless of the distance from which it is viewed is called size constancy. Shape constancy is the tendency to see an object as the same shape no matter what angle it is viewed from. Color constancy is the inclination to perceive familiar objects as retaining their color despite changes in sensory information. Likewise, brightness constancy is the perception of an object's brightness as the same even though the amount of light reaching the retina changes. Size, shape, brightness, and color constancies help us to understand and relate to the world. Psychophysical evidence indicates that without this ability, we would find the world very confusing.

Perception is categorized as internal and external. "Internal perception" ("interoception") tells us what is going on in our bodies. We can sense where our limbs are and whether we are sitting or standing; we can also sense whether we are hungry, or tired, and so forth. "External perception," or "sensory perception" ("exteroception"), tells us about the world outside our bodies. Using our senses of sight, hearing, touch, smell, and taste, we discover colors, sounds, textures, and so forth of the world at large.

The study of perception

Methods of studying perception range from essentially biological or physiological approaches, through psychological approaches, to the philosophy of mind, empiricist epistemology such as that of David Hume, John Locke, and George Berkeley, or Merleau-Ponty's affirmation of perception as the basis of all science and knowledge.

The philosophy of perception concerns how mental processes and symbols depend on the world internal and external to the perceiver. The philosophy of perception is very closely related to a branch of philosophy known as epistemology—the theory of knowledge.

While René Descartes concluded that the question "Do I exist?" can only be answered in the affirmative (cogito ergo sum), Freudian psychology suggests that self-perception is an illusion of the ego, and cannot be trusted to decide what is in fact real. Such questions are continuously reanimated, as each generation grapples with the nature of existence from within the human condition. The questions remain: Do our perceptions allow us to experience the world as it "really is?" Can we ever know another point of view in the way we know our own?

There are two basic understandings of perception: Passive Perception (PP) and Active Perception (PA). Passive perception (conceived by René Descartes) can be summarized as the following sequence of events: surrounding -> input (senses) -> processing (brain) -> output (re-action). Although still supported by mainstream philosophers, psychologists, and neurologists, this theory is losing momentum. The theory of active perception has emerged from extensive research on sensory illusions in the work of psychologists such as Richard L. Gregory. This theory is increasingly gaining experimental support and can be summarized as a dynamic relationship between “description” (in the brain) <-> senses <-> surrounding.

The most common theory of perception is naïve realism, in which people believe what they perceive to be things in themselves. Children develop this theory as a working hypothesis of how to deal with the world. Many people who have not studied biology carry this theory into adult life and regard their perception as the world itself rather than a pattern that overlays the form of the world. Thomas Reid took this theory a step further. He realized that sensation was composed of a set of data transfers but declared that these were in some way transparent so that there is a direct connection between perception and the world. This idea is called direct realism and has become popular in recent years with the rise of postmodernism and behaviorism. Direct realism does not clearly specify the nature of the bit of the world that is an object in perception, especially in cases where the object is something like a silhouette.

The succession of data transfers that are involved in perception suggests that somewhere in the brain there is a final set of activity, called sense data, that is the substrate of the percept. Perception would then be some form of brain activity and somehow the brain would be able to perceive itself. This concept is known as indirect realism. In indirect realism it is held that we can only be aware of external objects by being aware of representations of objects. This idea was held by John Locke and Immanuel Kant. The common argument against indirect realism, used by Gilbert Ryle amongst others, is that it implies a homunculus or Ryle's regress where it appears as if the mind is seeing the mind in an endless loop. This argument assumes that perception is entirely due to data transfer and classical information processing. This assumption is highly contentious and the argument can be avoided by proposing that the percept is a phenomenon that does not depend wholly upon the transfer and rearrangement of data.

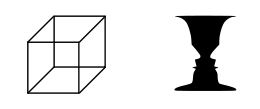

Direct realism and indirect realism are known as "realist theories of perception" because they hold that there is a world external to the mind. Direct realism holds that the representation of an object is located next to, or is even part of, the actual physical object whereas indirect realism holds that the representation of an object is brain activity. Direct realism proposes some as yet unknown direct connection between external representations and the mind whilst indirect realism requires some feature of modern physics to create a phenomenon that avoids infinite regress. Indirect realism is consistent with experiences such as: dreams, imaginings, hallucinations, illusions, the resolution of binocular rivalry, the resolution of multistable perception, the modeling of motion that allows us to watch television, the sensations that result from direct brain stimulation, the update of the mental image by saccades of the eyes, and the referral of events backwards in time.

There are also "anti-realist" understandings of perception: Idealism and Skepticism. Idealism holds that we create our reality whereas skepticism holds that reality is always beyond us. One of the most influential proponents of idealism was George Berkeley, who maintained that everything was mind or dependent upon mind. Berkeley's idealism has two main strands: phenomenalism, in which physical events are viewed as a special kind of mental event, and subjective idealism. David Hume is probably the most influential proponent of skepticism.

Perception is one of the oldest fields within scientific psychology, and there are correspondingly many theories about its underlying processes. The oldest quantitative law in psychology is the Weber-Fechner law, which quantifies the relationship between the intensity of physical stimuli and their perceptual effects. It was the study of perception that gave rise to the Gestalt school of psychology, with its emphasis on a holistic approach.
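As a rough illustration of what the Weber-Fechner law expresses, the following Python sketch uses Fechner's logarithmic form, in which perceived magnitude grows with the logarithm of stimulus intensity relative to a detection threshold. The function name, the constant k, and the threshold value are illustrative assumptions and are not taken from the text.

```python
import math

def perceived_magnitude(intensity, threshold=1.0, k=1.0):
    """Fechner's logarithmic form: p = k * ln(S / S0).

    `k` and `threshold` (S0) are assumed, illustrative constants.
    """
    if intensity <= 0 or threshold <= 0:
        raise ValueError("intensity and threshold must be positive")
    return k * math.log(intensity / threshold)

# Doubling a stimulus adds the same perceptual increment
# whether the starting intensity is low or high.
step_low = perceived_magnitude(20.0) - perceived_magnitude(10.0)
step_high = perceived_magnitude(2000.0) - perceived_magnitude(1000.0)
print(step_low, step_high)  # both are approximately ln(2)
```

Under this form, equal ratios of physical intensity correspond to equal differences in perceived magnitude, which is why the two steps above agree.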

Scientific accounts of perception

The science of perception is concerned with how events are observed and interpreted. An event may be the occurrence of an object at some distance from an observer. According to the scientific account this object will reflect light from the sun in all directions. Some of this reflected light from a particular, unique point on the object will fall all over the corneas of the eyes, and the combined cornea/lens system of the eyes will divert the light to two points, one on each retina. The pattern of points of light on each retina forms an image. This process also occurs in the case of silhouettes, where the pattern of absence of points of light forms an image. The overall effect is to encode position data on a stream of photons and to transfer this encoding onto a pattern on the retinas. The patterns on the retinas are the only optical images found in perception; prior to the retinas, light is arranged as a fog of photons going in all directions.

The images on the two retinas are slightly different and the disparity between the electrical outputs from these is resolved either at the level of the lateral geniculate nucleus or in a part of the visual cortex called 'V1'. The resolved data is further processed in the visual cortex, where some areas have relatively more specialized functions; for instance, area V5 is involved in the modeling of motion and V4 in adding color. The resulting single image that subjects report as their experience is called a 'percept'. Studies involving rapidly changing scenes show that the percept derives from numerous processes that each involve time delays.[2]

fMRI studies show that dreams, imaginings, and perceptions of similar things such as faces are accompanied by activity in many of the same areas of the brain. Thus, it seems that imagery that originates from the senses and internally generated imagery may have a shared ontology at higher levels of cortical processing.

If an object is also a source of sound, this is transmitted as pressure waves that are sensed by the cochlea in the ear. If the observer is blindfolded it is difficult to locate the exact source of sound waves; if the blindfold is removed, the sound can usually be located at the source. The data from the eyes and the ears is combined to form a 'bound' percept. The problem of how the bound percept is produced is known as the binding problem and is the subject of considerable study. The binding problem is also a question of how different aspects of a single sense (say, color and contour in vision) are bound to the same object when they are processed by spatially different areas of the brain.

Visual perception

In psychology, visual perception is the ability to interpret visible light information reaching the eyes which is then made available for planning and action. The resulting perception is also known as eyesight, sight or vision. The various components involved in vision are known as the visual system.

The visual system allows us to assimilate information from the environment to help guide our actions. The act of seeing starts when the lens of the eye focuses an image of the outside world onto a light-sensitive membrane in the back of the eye, called the retina. The retina is actually part of the brain that is isolated to serve as a transducer for the conversion of patterns of light into neuronal signals. The lens of the eye focuses light on the photoreceptive cells of the retina, which detect the photons of light and respond by producing neural impulses. These signals are processed in a hierarchical fashion by different parts of the brain, from the retina to the lateral geniculate nucleus, to the primary and secondary visual cortex of the brain.

The major problem in visual perception is that what people see is not simply a translation of retinal stimuli (i.e., the image on the retina). Thus, people interested in perception have long struggled to explain what visual processing does to create what we actually see.

Ibn al-Haytham (Alhacen), the "father of optics," pioneered the scientific study of the psychology of visual perception in his influential Book of Optics in the 1000s, being the first scientist to argue that vision occurs in the brain, rather than the eyes. He pointed out that personal experience has an effect on what people see and how they see, and that vision and perception are subjective. He explained possible errors in vision in detail, and as an example, described how a small child with less experience may have more difficulty interpreting what he or she sees. He also gave an example of an adult who can make mistakes in vision because experience suggests that he or she is seeing one thing, when he or she is really seeing something else.[3]

Ibn al-Haytham's investigations and experiments on visual perception also included sensation, variations in sensitivity, sensation of touch, perception of colors, perception of darkness, the psychological explanation of the moon illusion, and binocular vision.[4]

Hermann von Helmholtz is often credited with the first study of visual perception in modern times. Helmholtz held vision to be a form of unconscious inference: vision is a matter of deriving a probable interpretation for incomplete data.

Inference requires prior assumptions about the world: two well-known assumptions that we make in processing visual information are that light comes from above, and that objects are viewed from above and not below. The study of visual illusions (cases when the inference process goes wrong) has yielded much insight into what sort of assumptions the visual system makes.

The unconscious inference hypothesis has recently been revived in so-called Bayesian studies of visual perception. Proponents of this approach consider that the visual system performs some form of Bayesian inference to derive a perception from sensory data. Models based on this idea have been used to describe various visual subsystems, such as the perception of motion or the perception of depth.[5][6]
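The idea can be sketched with a toy calculation. The Python example below applies Bayes' rule to an ambiguous shaded patch that could be a convex bump lit from above or a concave dent lit from below. The hypothesis names and all probability values are assumptions chosen for illustration; they are not measurements and do not come from the studies cited above.

```python
def posterior(prior, likelihood):
    """Bayes' rule over a discrete set of hypotheses."""
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    total = sum(unnormalized.values())
    return {h: value / total for h, value in unnormalized.items()}

# Assumed prior: light usually comes from above, so a patch that is
# bright on top is more plausibly a convex bump than a concave dent.
prior = {"convex": 0.9, "concave": 0.1}

# The image itself is ambiguous: both shapes explain it equally well.
likelihood = {"convex": 0.5, "concave": 0.5}

post = posterior(prior, likelihood)
best = max(post, key=post.get)
print(best, post)
```

Because the sensory evidence does not favor either shape, the prior assumption decides the interpretation. If the likelihood strongly favored the concave reading (for instance, from a visible light source below), the same rule could overturn the prior.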

Gestalt psychologists working primarily in the 1930s and 1940s raised many of the research questions that are studied by vision scientists today. The Gestalt Laws of Organization have guided the study of how people perceive visual components as organized patterns or wholes, instead of many different parts. Gestalt is a German word that translates to "configuration or pattern." According to this theory, there are six main factors that determine how we group things according to visual perception: Proximity, Similarity, Closure, Symmetry, Common fate and Continuity.

The major problem with the Gestalt laws (and the Gestalt school generally) is that they are descriptive not explanatory. For example, one cannot explain how humans see continuous contours by simply stating that the brain "prefers good continuity." Computational models of vision have had more success in explaining visual phenomena[7] and have largely superseded Gestalt theory.

Color perception

Color vision is the capacity of an organism or machine to distinguish objects based on the wavelengths (or frequencies) of the light they reflect or emit. The nervous system derives color by comparing the responses to light from the several types of cone photoreceptors in the eye. These cone photoreceptors are sensitive to different portions of the visible spectrum. For humans, the visible spectrum ranges approximately from 380 to 750 nm, and there are normally three types of cones. The visible range and number of cone types differ between species.

In the human eye, the cones are maximally receptive to short, medium, and long wavelengths of light and are therefore usually called S-, M-, and L-cones. L-cones are often referred to as the red receptor, but while the perception of red depends on this receptor, microspectrophotometry has shown that its peak sensitivity is in the greenish-yellow region of the spectrum. In most primates closely related to humans there are three types of color receptors (known as cone cells). This confers trichromatic color vision, so these primates, like humans, are known as trichromats. Many other primates and other mammals are dichromats, and many mammals have little or no color vision.

The peak response of human color receptors varies, even amongst individuals with 'normal' color vision;[8] in non-human species this polymorphic variation is even greater, and it may well be adaptive.[9]

Chromatic adaptation

An object may be viewed under various conditions. For example, it may be illuminated by the sunlight, the light of a fire, or a harsh electric light. In all of these situations, human vision perceives that the object has the same color: an apple always appears red, whether viewed at night or during the day. On the other hand, a camera with no adjustment for light may register the apple as having many different shades. This feature of the visual system is called chromatic adaptation, or color constancy; when the correction occurs in a camera it is referred to as white balance.
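For the camera side of this comparison, one simple white-balance heuristic is the "gray-world" assumption: the average color of a scene is taken to be neutral gray, and each color channel is rescaled accordingly. The Python sketch below illustrates that heuristic only. It is not a model of how the visual system achieves color constancy, and the function name and pixel values are hypothetical.

```python
def gray_world_balance(pixels):
    """Rescale R, G, B so that the three channel means become equal.

    `pixels` is a list of (r, g, b) tuples with values in 0..255.
    """
    n = len(pixels)
    means = [sum(p[c] for p in pixels) / n for c in range(3)]
    gray = sum(means) / 3.0
    # Per-channel gain; a channel with zero mean is left unchanged.
    gains = [gray / m if m > 0 else 1.0 for m in means]
    return [
        tuple(min(255.0, p[c] * gains[c]) for c in range(3))
        for p in pixels
    ]

# Hypothetical scene photographed under reddish firelight.
scene = [(220, 120, 60), (120, 100, 50), (110, 50, 70)]
balanced = gray_world_balance(scene)
for before, after in zip(scene, balanced):
    print(before, "->", tuple(round(v, 1) for v in after))
```

The red channel is attenuated and the blue channel boosted, which removes the overall color cast of the illuminant while preserving differences between pixels.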

Chromatic adaptation is one aspect of vision that may fool someone into observing a color-based optical illusion. Though the human visual system generally does maintain constant perceived color under different lighting, there are situations where the brightness of a stimulus will appear reversed relative to its "background" when viewed at night. For example, the bright yellow petals of flowers will appear dark compared to the green leaves in very dim light. The opposite is true during the day. This is known as the Purkinje effect, and arises because in very low light, human vision is approximately monochromatic and most sensitive to wavelengths near 507 nm (blue-green).

Perception of distance and depth

We are constantly judging the distance between ourselves and other objects, and we use many cues to determine the distance and the depth of objects. Some of these cues depend on visual messages that one eye alone can transmit: these are called "monocular cues." Others, known as "binocular cues," require the use of both eyes. Having two eyes allows us to make more accurate judgments about distance and depth, particularly when the objects are relatively close.

Depth perception is the visual ability to perceive the world in three dimensions. It is a trait common to many higher animals. Depth perception allows the beholder to accurately gauge the distance to an object.

Depth perception combines several types of depth cues grouped into two main categories: monocular cues (cues available from the input of just one eye) and binocular cues (cues that require input from both eyes).

Monocular cues

- Motion parallax - When an observer moves, the apparent relative motion of several stationary objects against a background gives hints about their relative distance. This effect can be seen clearly when driving in a car: nearby things pass quickly, while far-off objects appear stationary. Some animals that lack binocular vision due to wide placement of the eyes employ parallax more explicitly than humans for depth cueing (some types of birds, which bob their heads to achieve motion parallax, and squirrels, which move in lines orthogonal to an object of interest to do the same).

- Depth from motion - A form of depth from motion, kinetic depth perception, is determined by dynamically changing object size. As objects in motion become smaller, they appear to recede into the distance or move farther away; objects in motion that appear to be getting larger seem to be coming closer.

- Color vision - Correct interpretation of color, and especially lighting cues, allows the beholder to determine the shape of objects, and thus their arrangement in space. The color of distant objects is also shifted towards the blue end of the spectrum (e.g., distant mountains). Painters, notably Cezanne, employ "warm" pigments (red, yellow, and orange) to bring features forward towards the viewer, and "cool" ones (blue, violet, and blue-green) to indicate the part of a form that curves away from the picture plane.

- Perspective - The property of parallel lines converging at infinity allows us to reconstruct the relative distance of two parts of an object, or of landscape features.

- Relative size - An automobile that is close to us looks larger than one that is far away; our visual system exploits the relative size of similar (or familiar) objects to judge distance.

- Distance fog - Due to light scattering by the atmosphere, objects that are a great distance away look hazier. In painting, this is called "atmospheric perspective." The foreground is sharply defined; the background is relatively blurred.

- Depth from Focus - The lens of the eye can change its shape to bring objects at different distances into focus. Knowing at what distance the lens is focused when viewing an object means knowing the approximate distance to that object.

- Occlusion - The blocking of the sight of objects by others is also a cue that provides information about relative distance. However, this information only allows the observer to create a "ranking" of relative nearness.

- Peripheral vision - At the outer extremes of the visual field, parallel lines become curved, as in a photo taken through a fish-eye lens. This effect, although it's usually eliminated from both art and photos by the cropping or framing of a picture, greatly enhances the viewer's sense of being positioned within a real, three-dimensional space.

- Texture gradient - Suppose you are standing on a gravel road. The gravel near you can be clearly seen in terms of shape, size, and color. As your vision shifts towards the distant road the texture cannot be clearly differentiated.
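Two of the monocular cues above, relative size and motion parallax, follow from simple geometry. The Python sketch below computes the visual angle subtended by an object and the angular speed at which a stationary object sweeps past a moving observer at the moment it is directly to the side. The function names and the example sizes, distances, and speeds are illustrative assumptions.

```python
import math

def visual_angle_deg(size_m, distance_m):
    """Angle subtended at the eye by an object of the given size."""
    return math.degrees(2.0 * math.atan(size_m / (2.0 * distance_m)))

def parallax_rate_deg_per_s(observer_speed_m_s, distance_m):
    """Angular speed of a stationary object directly to the side of an
    observer moving in a straight line (omega = v / d, in radians)."""
    return math.degrees(observer_speed_m_s / distance_m)

# Relative size: the same 1.8 m wide car, near and far.
near_car = visual_angle_deg(1.8, 10.0)
far_car = visual_angle_deg(1.8, 100.0)

# Motion parallax: driving at 20 m/s past a fence 5 m away
# and a hill 2000 m away.
fence = parallax_rate_deg_per_s(20.0, 5.0)
hill = parallax_rate_deg_per_s(20.0, 2000.0)

print(near_car, far_car)
print(fence, hill)
```

The nearer car subtends roughly ten times the visual angle of the farther one, and the fence sweeps across the visual field hundreds of times faster than the hill, which is why distant objects appear almost stationary from a moving car.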

Binocular and oculomotor cues

- Stereopsis/Retinal disparity - Animals that have their eyes placed frontally can also use information derived from the different projection of objects onto each retina to judge depth. By using two images of the same scene obtained from slightly different angles, it is possible to triangulate the distance to an object with a high degree of accuracy. If an object is far away, the disparity of that image falling on both retinas will be small. If the object is close, the disparity will be large. It is stereopsis that tricks people into thinking they perceive depth when viewing Magic Eyes, autostereograms, 3D movies, and stereoscopic photos.

- Accommodation - When we try to focus on far away objects, the ciliary muscles stretch the eye lens, making it thinner. The kinesthetic sensations of the contracting and relaxing ciliary muscles (intraocular muscles) are sent to the visual cortex, where they are used for interpreting distance and depth.

- Convergence - By virtue of stereopsis the two eyeballs focus on the same object. In doing so they converge. The convergence will stretch the extraocular muscles. Kinesthetic sensations from these extraocular muscles also help in depth/distance perception. The angle of convergence is smaller when the eyes are fixating on far away objects.
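The triangulation behind stereopsis and convergence can be sketched with elementary trigonometry. In the Python example below, the two eyes and a fixated point form an isosceles triangle whose base is the distance between the eyes. The interpupillary distance of 0.063 m is an assumed, roughly typical adult value, and the function names are hypothetical.

```python
import math

IPD_M = 0.063  # assumed interpupillary distance in meters

def vergence_angle_rad(distance_m, ipd_m=IPD_M):
    """Angle between the two lines of sight when both eyes fixate
    a point straight ahead at the given distance."""
    return 2.0 * math.atan(ipd_m / (2.0 * distance_m))

def distance_from_vergence(angle_rad, ipd_m=IPD_M):
    """Invert the relation above: recover distance by triangulation."""
    return ipd_m / (2.0 * math.tan(angle_rad / 2.0))

for d in (0.25, 1.0, 10.0):
    angle = vergence_angle_rad(d)
    print(d, math.degrees(angle), distance_from_vergence(angle))
```

In this geometry the angle between the lines of sight shrinks as the fixated point moves farther away, so both convergence and retinal disparity carry the most distance information for nearby objects.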

Depth perception in art

A woodcut showing a mechanical method for reproducing a correct perspective drawing. The strings placed from the viewer's eye to the lute correspond to the rays of light traveling from the same points.

As art students learn, there are several ways in which perspective can help in estimating distance and depth. In linear perspective, two parallel lines that extend into the distance seem to come together at some point on the horizon. In aerial perspective, distant objects have a hazy appearance and a somewhat blurred outline. The elevation of an object also serves as a perspective cue to depth.

Trained artists are keenly aware of the various methods for indicating spatial depth (color shading, distance fog, perspective, and relative size), and take advantage of them to make their works appear "real." The viewer feels it would be possible to reach in and grab the nose of a Rembrandt portrait or an apple in a Cezanne still life, or step inside a landscape and walk around among its trees and rocks.

Photographs capturing perspective are two-dimensional images that often illustrate the illusion of depth. Stereoscopes and Viewmasters, as well as three-dimensional movies, employ binocular vision by forcing the viewer to see two images created from slightly different positions (points of view). By contrast, a telephoto lens (used in televised sports, for example, to zero in on members of a stadium audience) has the opposite effect. The viewer sees the size and detail of the scene as if it were close enough to touch, but the camera's perspective is still derived from its actual position a hundred meters away, so background faces and objects appear about the same size as those in the foreground.

At the end of World War II, Merleau-Ponty published Phenomenology of Perception, in which he wrote:

All my knowledge of the world ... is gained from my own particular point of view, or from some experience of the world without which the symbols of science would be meaningless ... I am the absolute source, my existence does not stem from my antecedents, from my physical and social environment; instead it moves out towards them and sustains them, for I alone bring into being for myself ... the horizon whose distance from me would be abolished ... if I were not there to scan it with my gaze.

Many artists applied such theories to their own aesthetics and started to focus on the importance of the person's point of view (and its mutability) for a wider understanding of reality. They began to conceive the audience, and the exhibition’s space, as fundamental parts of the artwork, creating a kind of mutual communication between them and the viewer, who ceased, therefore, to be a mere addressee of their message.

Perception of movement

The perception of movement is a complicated process involving both visual information from the retina and messages from the muscles around the eyes as they follow an object. The perception of movement depends in part on movement of the image across the retina of the eye. If you stand still and move your head to look around you, the images of all the objects in the room will pass across your retina. Yet you will perceive all the objects as stationary. Even if you hold your head still and move only your eyes, the images will continue to pass across your retina. But the messages from the eye muscles seem to counteract those from the retina, so the objects in the room will be perceived as motionless.

Motion perception is the process of inferring the speed and direction of objects and surfaces that move in a visual scene given some visual input. Although this process appears straightforward to most observers, it has proven to be a difficult problem from a computational perspective, and extraordinarily difficult to explain in terms of neural processing. Motion perception is studied by many disciplines, including psychology, neuroscience, neurophysiology, and computer science.

Visual illusions

At times our perceptual processes trick us into believing that an object is moving when, in fact, it is not. There is a difference, then, between real movement and apparent movement. Examples of apparent movement are the autokinetic illusion, stroboscopic motion, and the phi phenomenon. The autokinetic illusion is the perception that a stationary object is moving. Apparent movement that results from flashing a series of still pictures in rapid succession, as in a motion picture, is called stroboscopic motion. The phi phenomenon is the apparent movement caused by flashing lights in sequence, as on theater marquees.

Visual illusions occur when we use a variety of sensory cues to create perceptual experiences that do not actually exist. Some are physical illusions, such as the bent appearance of a stick in water. Others are perceptual illusions, which occur because a stimulus contains misleading cues that lead to an inaccurate perception. Most of stage magic is based on the principles of physical and perceptual illusion.

Amodal perception

Amodal perception is the term used to describe the full perception of a physical structure when it is only partially perceived. For example, a table will be perceived as a complete volumetric structure even if only part of it is visible; the internal volumes and hidden rear surfaces are perceived despite the fact that only the near surfaces are exposed to view, and the world around us is perceived as a surrounding void, even though only part of it is in view at any time.

Formulation of the theory is credited to the Belgian psychologist Albert Michotte and Italian psychologist Fabio Metelli, with their work developed in recent years by E. S. Reed and Gestaltists.

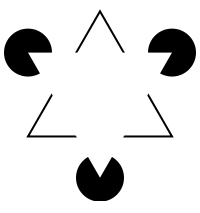

Modal completion is a similar phenomenon in which a shape is perceived to be occluding other shapes even when the shape itself is not drawn. Examples include the triangle that appears to be occluding three disks in the Kanizsa triangle and the circles and squares that appear in different versions of the Koffka cross.

Haptic perception

The haptic perceptual system is unusual in that it can include the sensory receptors from the whole body. It is closely linked to the movement of the body so can have a direct effect on the world being perceived. Gibson (1966) defined the haptic system as "The sensibility of the individual to the world adjacent to his body by use of his body."[10]

The concept of haptic perception is closely allied to the concept of "active touch," which recognizes that more information is gathered when a motor plan (movement) is associated with the sensory system, and to that of "extended physiological proprioception," the realization that when using a tool such as a stick, perception is transparently transferred to the end of the tool.

Interestingly, the capabilities of the haptic sense, and of the somatic senses in general, have traditionally been underrated. In contrast to common expectation, loss of the sense of touch is a catastrophic deficit. It makes it almost impossible to walk or perform other skilled actions such as holding objects or using tools.[11] This highlights the critical and subtle capabilities of touch and the somatic senses in general. It also highlights the potential of haptic technology.

Speech perception

Speech perception refers to the processes by which humans are able to interpret and understand the sounds used in language. The process of perceiving speech begins at the level of the sound signal and the process of audition. After processing the initial auditory signal, speech sounds are further processed to extract acoustic cues and phonetic information. This speech information can then be used for higher-level language processes, such as word recognition.

The study of speech perception is closely linked to phonetics and phonology in linguistics, and to cognitive psychology and the study of perception in psychology. Research in speech perception seeks to understand how human listeners recognize speech sounds and use this information to understand spoken language. Speech research has applications in building computer systems that can recognize speech, as well as in improving speech recognition for hearing- and language-impaired listeners.

Proprioception

Proprioception (from Latin proprius, meaning "one's own" and perception) is the sense of the relative position of neighboring parts of the body. Unlike the six "exteroceptive" senses (sight, taste, smell, touch, hearing, and balance), by which we perceive the outside world, and the "interoceptive" senses, by which we perceive pain and the stretching of internal organs, proprioception is a third distinct sensory modality that provides feedback solely on the status of the body internally. It is the sense that indicates whether the body is moving with the required effort, as well as where the various parts of the body are located in relation to each other.

Perception and reality

Many cognitive psychologists hold that, as we move about in the world, we create a model of how the world works. That is, we sense the objective world, but our sensations map to percepts, and these percepts are provisional, in the same sense that scientific hypotheses are provisional (cf. scientific method). As we acquire new information, our percepts shift, thus solidifying the idea that perception is a matter of belief.

Just as one object can give rise to multiple percepts, as in ambiguous images and other visual illusions, so an object may fail to give rise to any percept at all: if the percept has no grounding in a person's experience, the person may literally not perceive it.

This confusing ambiguity of perception is exploited in human technologies such as camouflage, and also in biological mimicry, for example by Peacock butterflies, whose wings bear eye markings that birds respond to as though they were the eyes of a dangerous predator. Perceptual ambiguity is not restricted to vision. For example, recent touch perception research found that kinesthesia-based haptic perception strongly relies on the forces experienced during touch.[12] This makes it possible to produce illusory touch percepts.[13][14]

Cognitive theories of perception assume there is a poverty of stimulus. This (with reference to perception) is the claim that sensations are, by themselves, unable to provide a unique description of the world. Sensations require 'enriching', which is the role of the mental model. A different type of theory is the perceptual ecology approach of James J. Gibson.
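The poverty-of-stimulus claim can be illustrated with a simple geometric case. The angle an object subtends at the eye depends only on the ratio of its size to its distance, so the same sensation is compatible with many different objects. The sketch below uses the standard visual-angle formula; the function name and the example sizes and distances are illustrative assumptions, not taken from the source.

```python
import math

def visual_angle_deg(size, distance):
    """Angle (in degrees) subtended at the eye by an object of the given
    size viewed face-on at the given distance (same length unit for both)."""
    return math.degrees(2 * math.atan(size / (2 * distance)))

# Hypothetical example: a 1 m object at 10 m and a 2 m object at 20 m.
near_small = visual_angle_deg(1.0, 10.0)
far_large = visual_angle_deg(2.0, 20.0)

# Both objects subtend the same angle, so the angle alone
# cannot tell the observer which of the two is present.
print(near_small, far_large)
```

On the cognitive view, it is the mental model (for example, knowledge of the typical size of familiar objects) that resolves such ambiguity.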

Perception-in-action

Gibson rejected the assumption of a poverty of stimulus by rejecting the notion that perception is based in sensations. Instead, he investigated what information is actually presented to the perceptual systems. He (and the psychologists who work within this paradigm) detailed how the world could be specified to a mobile, exploring organism via the lawful projection of information about the world into energy arrays. Specification is a 1:1 mapping of some aspect of the world into a perceptual array; given such a mapping, no enrichment is required and perception is direct.

The ecological understanding of perception advanced from Gibson's early work is perception-in-action, the notion that perception is a requisite property of animate action. Without perception, action would not be guided, and without action, perception would be pointless. Animate actions require perceiving and moving together. In a sense, "perception and movement are two sides of the same coin, the coin is action."[15] A mathematical theory of perception-in-action, General Tau Theory, has been devised and investigated in many forms of controlled movement by many different species of organism. According to this theory, tau information, or time-to-goal information, is the fundamental 'percept' in perception.
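As a rough illustration of what "time-to-goal information" might look like, tau is commonly described in the literature on this theory as the current size of a gap (for example, the distance to a goal) divided by the rate at which the gap is closing. The following minimal Python sketch assumes that definition; the function name, the sign convention (a positive rate means the gap is shrinking), and the numbers are illustrative assumptions, not taken from the source.

```python
def tau(gap, closure_rate):
    """Time-to-closure of a gap at its current rate of closure.

    gap: current size of the gap (e.g. distance to a goal), in any unit.
    closure_rate: how fast the gap is shrinking, in that unit per second.
                  Assumed convention: positive means the gap is closing.
    """
    if closure_rate <= 0:
        # The gap is not closing, so there is no finite time-to-goal.
        return float("inf")
    return gap / closure_rate

# Hypothetical example: an observer 12 m from a wall, approaching at 3 m/s.
time_to_contact = tau(12.0, 3.0)
print(time_to_contact)
```

Under these assumptions, a gap of 12 meters closing at 3 meters per second gives a tau of 4 seconds.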

We gather information about the world and interact with it through our actions. Perceptual information is critical for action. Perceptual deficits may lead to profound deficits in action, as in the case of deficits related to touch perception.[16]

Perceptual abilities and the observer

Perception is often referred to as a "cognitive process" in which information processing is used to transfer information from the world into the brain and mind where it is further processed and related to other information. Some philosophers and psychologists propose that this processing gives rise to particular mental states, whilst others envisage a direct path back into the external world in the form of action.

Many eminent behaviorists such as John B. Watson and B. F. Skinner proposed that perception acts largely as a process between a stimulus and a response, with other brain activities apparently irrelevant to the process. As Skinner wrote:

The objection to inner states is not that they do not exist, but that they are not relevant in a functional analysis.[17]

However, it has been shown by numerous researchers that sensory and perceptual experiences are affected by many factors that are not attributes of the object of perception but rather of the observer. These include the person’s race, gender, and age, among many others.

Unlike puppies and kittens, human babies are born with their eyes open and functioning. Neonates begin to absorb and process information from the outside world as soon as they enter it (in some aspects even before). Even before babies are born, their ears are in working order. Fetuses in the uterus can hear sounds and startle at a sudden, loud noise in the mother’s environment. After birth, babies show signs that they remember sounds they heard in the womb. Babies also are born with the ability to tell the direction of a sound. They show this by turning their heads toward the source of a sound. Infants are particularly tuned in to the sounds of human speech. Their senses work fairly well at birth and rapidly improve to near-adult levels.

Besides experience and learning, our perceptions can also be influenced by such factors as our motivations, values, interests and expectations, and cultural preconceptions.

Notes

- Oxford English Dictionary (Oxford University Press, 1971, ISBN 019861117X).

- K. Moutoussis and S. Zeki, "A Direct Demonstration of Perceptual Asynchrony in Vision," Proceedings of the Royal Society of London, Series B: Biological Sciences, 264 (1997): 393-399.

- Bradley Steffens, Ibn al-Haytham: First Scientist, Chapter 5 (Morgan Reynolds Publishing, 2006, ISBN 1599350246).

- Omar Khaleefa, "Who Is the Founder of Psychophysics and Experimental Psychology?" American Journal of Islamic Social Sciences 16(2) (Summer 1999).

- Mamassian, Landy & Maloney, 2002.

- A Primer on Probabilistic Approaches to Visual Perception, Duke University. Retrieved February 10, 2009.

- Steve Dakin, Computational models of contour integration, UCL.

- Jay Neitz and Gerald H. Jacobs, "Polymorphism of the long-wavelength cone in normal human colour vision," Nature 323 (1986): 623-625.

- Gerald H. Jacobs, "Primate photopigments and primate color vision," PNAS 93(2) (1996): 577–581.

- James J. Gibson, The Senses Considered as Perceptual Systems (Greenwood Press Reprint, 1983, ISBN 9780313239618).

- Gabriel Robles-De-La-Torre, "The Importance of the Sense of Touch in Virtual and Real Environments," 2006. Retrieved February 10, 2009.

- Robles-De-La-Torre & Hayward, "Force can overcome object geometry in the perception of shape through active touch," 2001. Retrieved February 10, 2009.

- Gabriel Robles-De-La-Torre, "Haptic Perception of Shape: touch illusions, forces and the geometry of objects," 2007. Retrieved February 10, 2009.

- Duncan Graham-Rowe, "The Cutting Edge of Haptics," MIT Technology Review, Friday, August 25, 2006. Retrieved February 10, 2009.

- D.N. Lee.

- Robles-De-La-Torre, "The Importance of the Sense of Touch in Virtual and Real Environments," 2006. Retrieved February 10, 2009.

- B. F. Skinner, Science and Human Behavior (1953, ISBN 0029290406).

References

- BonJour L. 2001. "Epistemological Problems of Perception" The Stanford Encyclopedia of Philosophy, Edward Zalta (ed.) Retrieved February 10, 2009.

- Breckon, Toby P. and Robert B. Fisher Amodal volume completion: 3D visual completion Computer Vision and Image Understanding. 99: 499-526. Retrieved February 10, 2009.

- Burge, T. 1993. Vision and intentional content, in E. LePore and R. Van Gulick (eds.) John Searle and his Critics, Oxford: Blackwell. ISBN 9780631187028

- Crane, T. 2005. The Problem of Perception The Stanford Encyclopedia of Philosophy, Edward Zalta (ed.). Retrieved February 10, 2009.

- Conway, B.R. 2001. "Spatial structure of cone inputs to color cells in alert macaque primary visual cortex (V-1)" Journal of Neuroscience. 21(8): 2768-2783. Retrieved February 10, 2009.

- Descartes, Rene. 1641. Meditations on First Philosophy Retrieved February 10, 2009.

- Dretske, Fred. 1999. Knowledge and the Flow of Information, Oxford: Blackwell. ISBN 9781575861951

- Evans, Gareth. 1982. The Varieties of Reference, Oxford: Clarendon Press. ISBN 9780198246862

- Flanagan, J.R., Lederman, S.J. 2001. Neurobiology: Feeling bumps and holes, News and Views, Nature, 412(6845): 389-91. Retrieved February 10, 2009.

- Flynn, Bernard. 2004. "Maurice Merleau-Ponty" The Stanford Encyclopedia of Philosophy, Edward Zalta (ed.). Retrieved February 10, 2009.

- Gibson, James. J. 1983. The Senses Considered as Perceptual Systems. Greenwood Press Reprint. ISBN 9780313239618

- ———. 1987. The Ecological Approach to Visual Perception. Lawrence Erlbaum Associates, ISBN 0898599598

- Gregory, Richard L. 1997. Eye and Brain. Princeton, NJ: Princeton University Press. ISBN 0691048371

- Hayward V, Astley OR, Cruz-Hernandez M, Grant D, Robles-De-La-Torre G. Haptic interfaces and devices. Sensor Review 24(1): 16-29 (2004). Retrieved February 10, 2009.

- Hume, David. 1739-1740. A Treatise of Human Nature: Being An Attempt to Introduce the Experimental Method of Reasoning Into Moral Subjects Retrieved February 10, 2009.

- Kant, Immanuel. 1781. Critique of Pure Reason. Norman Kemp Smith (trans.) with preface by Howard Caygill, Palgrave Macmillan. Retrieved February 10, 2009.

- Kandel E, Schwartz J, Jessell T. 2000. Principles of Neural Science. 4th ed. New York, NY: McGraw-Hill. ISBN 0838577016

- Kitayama, S., Duffy, S., Kawamura, T., & Larsen, J. T. 2003. Perceiving an object and its context in different cultures: A cultural look at New Look. Psychological Science, 14, 201-206.

- Lachman, S. J. 1984. Processes in visual misperception: Illusions for highly structured stimulus material. Paper presented at the 92nd annual convention of the American Psychological Association, Toronto, Canada.

- Lachman, S. J. 1996. Processes in Perception: Psychological transformations of highly structured stimulus material. Perceptual and Motor Skills. 83: 411-418.

- Lehar, Steven. Gestalt Isomorphism and the Quantification of Spatial Perception Gestalt Theory 21(2): 122-139. Retrieved February 10, 2009.

- Martin, Paul R. 1998. "Colour processing in the primate retina: recent progress." Journal of Physiology. 513(3): 631-638. Retrieved February 10, 2009.

- McCann, M., (ed.). 1993. Edwin H. Land's Essays. Springfield, VA: Society for Imaging Science and Technology. ISBN 9780892081707

- McClelland, D. C., and Atkinson, J. W. 1948. The projective expression of needs: The effect of different intensities of the hunger drive on perception. Journal of Psychology, 25: 205-222.

- McCreery, Charles. 2006. "Perception and Hallucination: the Case for Continuity." Philosophical Paper No. 2006-1.

- Merleau-Ponty, Maurice. 2002. Phenomenology of Perception, Routledge London. ISBN 9780415278416

- McDowell, John. 1982. "Criteria, Defeasibility, and Knowledge," Proceedings of the British Academy. 455–479.

- McDowell, John. 1996. Mind and World, Cambridge, MA: Harvard University Press. ISBN 9780674576100

- McGinn, Colin. 1996. "Consciousness and Space," In Conscious Experience, Thomas Metzinger (ed.). Imprint Academic. ISBN 9780907845102

- Mead, George Herbert. 1938. "Mediate Factors in Perception," Essay 8 in The Philosophy of the Act, Charles W. Morris with John M. Brewster, Albert M. Dunham and David Miller (eds.), Chicago: University of Chicago. 125-139.

- Moutoussis, K. and Zeki, S. 1997. A direct demonstration of perceptual asynchrony in vision. Proceedings of the Royal Society of London, Series B: Biological Sciences, 264: 393-399.

- Palmer, S. E. 1999. Vision science: Photons to Phenomenology. Cambridge, MA: Bradford Books/MIT Press. ISBN 9780262161831

- Peacocke, Christopher. 1984. Sense and Content, Oxford: Oxford University Press. ISBN 9780198247029

- Peters, G. 2000. "Theories of Three-Dimensional Object Perception - A Survey" Recent Research Developments in Pattern Recognition, Transworld Research Network. Retrieved February 10, 2009.

- Pinker, S. 1997. The Mind’s Eye. In How the Mind Works. 211–233. ISBN 0393318486

- Purves D, Lotto B. 2003. Why We See What We Do: An Empirical Theory of Vision. Sunderland, MA: Sinauer Associates. ISBN 9780878937523

- Putnam, Hilary. 2001. The Threefold Cord. New York, NY: Columbia University Press. ISBN 9780231102872

- Rowe, Michael H. "Trichromatic color vision in primates." News in Physiological Sciences. 17(3); 93-98. Retrieved February 10, 2009.

- Russell, Bertrand. 1912. The Problems of Philosophy Retrieved February 10, 2009. London: Williams and Norgate; New York, NY: Henry Holt and Company.

- Shoemaker, Sydney. 1990. "Qualities and Qualia: What's in the Mind?" Philosophy and Phenomenological Research 50, Supplement. 109–131.

- Siegel, Susanna. 2005. "The Contents of Perception" The Stanford Encyclopedia of Philosophy, Edward Zalta (ed.). Retrieved February 10, 2009.

- Skinner, B.F. 1965. Science and Human Behavior. Free Press. ISBN 0029290406

- Steinman, Scott B., Barbara A. Steinman, and Ralph Philip Garzia. 2000. Foundations of Binocular Vision: A Clinical perspective. McGraw-Hill Medical. ISBN 0838526705

- Tong, Frank. 2003. "Primary Visual Cortex and Visual Awareness" Nature Reviews, Neuroscience, Vol 4, 219. Retrieved February 10, 2009.

- Turnbull, C. M. 1961. Observations. American Journal of Psychology, 1: 304-308.

- Tye, Michael. 2002. Consciousness, Color and Content, Cambridge, MA: MIT Press. ISBN 9780262700887

- Verrelli, B. C. and S. Tishkoff. 2004. "Color vision molecular variation." American Journal of Human Genetics. 75(3): 363-375. Retrieved February 10, 2009.

- Wandell, B. 1995. Foundations of Vision Sinauer Press, MA. ISBN 0878938532

External links

All links retrieved November 3, 2025.

- Sound and Music – Dale Purves Lab

- Richard L Gregory

- University of Nottingham Visual Neuroscience

Credits

New World Encyclopedia writers and editors rewrote and completed the Wikipedia article in accordance with New World Encyclopedia standards. This article abides by terms of the Creative Commons CC-by-sa 3.0 License (CC-by-sa), which may be used and disseminated with proper attribution. Credit is due under the terms of this license that can reference both the New World Encyclopedia contributors and the selfless volunteer contributors of the Wikimedia Foundation. The history of earlier contributions by wikipedians is accessible to researchers here:

- Perception history

- Amodal_perception history

- Color_vision history

- Visual_perception history

- Depth_perception history

- Philosophy_of_perception history

- Motion_perception history

Note: Some restrictions may apply to use of individual images which are separately licensed.