Charge-coupled device

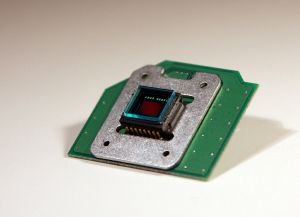

A charge-coupled device (CCD) is a semiconductor device (described as an "analog shift register") that enables the transmission of analog signals (electric charges) through successive stages (capacitors), controlled by a clock signal. "CCD" refers to the way the image signal is read out from the chip. Under the control of an external circuit, each capacitor can transfer its electric charge to one or the other of its neighbors. CCDs can be used as a form of memory or for delaying samples of analog signals.

Today, charge-coupled devices are most widely used in arrays of photoelectric light sensors, to serialize parallel analog signals. CCDs are used in digital photography, astronomy (particularly in photometry and "lucky imaging"), sensors, electron microscopy, medical fluoroscopy, and optical and UV spectroscopy. (Not all image sensors use CCD technology; for example, CMOS chips are also commercially available.)

History

In 1961, Eugene F. Lally of the Jet Propulsion Laboratory published a paper titled "Mosaic Guidance for Interplanetary Travel," illustrating a mosaic array of optical detectors that formed a photographic image using digital processing. This paper gave birth to the concept of digital photography. Lally noted such an optical array required development so digital cameras could be produced.

The CCD technology needed for such an array was invented in 1969 by Willard Boyle and George E. Smith at AT&T Bell Labs. The lab was working on the picture phone and the development of semiconductor bubble memory. Merging these two initiatives, Boyle and Smith conceived of the design of what they termed "Charge 'Bubble' Devices." The essence of the design was the ability to transfer charge along the surface of a semiconductor.

As the CCD started its life as a memory device, one could only "inject" charge into the device at an input register. However, it was immediately clear that the CCD could receive charge via the photoelectric effect and electronic images could be created. By 1969, Bell researchers were able to capture images with simple linear devices; thus the CCD was born.

Several companies, including Fairchild Semiconductor, RCA, and Texas Instruments, picked up on the invention and began development programs. Fairchild was the first with commercial devices and by 1974 had a linear 500-element device and a 2-D 100 x 100 pixel device. Under the leadership of Kazuo Iwama, Sony also started a large and costly development effort on CCDs. Eventually, Sony managed to mass-produce CCDs for its camcorders. Before this happened, Iwama died in August 1982. Subsequently, a CCD chip was placed on his tombstone to acknowledge his contribution.[1]

In January 2006, Boyle and Smith were awarded the National Academy of Engineering Charles Stark Draper Prize for their work on the CCD.[2]

Basics of operation

In a CCD for capturing images, there is a photoactive region (an epitaxial layer of silicon), and a transmission region made out of a shift register (the CCD, properly speaking).

An image is projected by a lens on the capacitor array (the photoactive region), causing each capacitor to accumulate an electric charge proportional to the light intensity at that location. A one-dimensional array, used in line-scan cameras, captures a single slice of the image, while a two-dimensional array, used in video and still cameras, captures a two-dimensional picture corresponding to the scene projected onto the focal plane of the sensor. Once the array has been exposed to the image, a control circuit causes each capacitor to transfer its contents to its neighbor. The last capacitor in the array dumps its charge into a charge amplifier, which converts the charge into a voltage. By repeating this process, the controlling circuit converts the entire semiconductor contents of the array to a sequence of voltages, which it samples, digitizes and stores in some form of memory.
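The readout sequence described above can be sketched in a few lines of Python. This is a minimal illustration, not a model of any particular chip: the function name `read_out`, the `gain` parameter, and the arrangement in which rows shift into a single serial register are all assumptions made for the example.

```python
def read_out(image, gain=1.0):
    """Simulate serial readout of a 2-D CCD (illustrative sketch only).

    image: list of rows, each a list of accumulated charges.
    gain:  hypothetical charge-to-voltage factor of the output amplifier.
    Returns the voltages in the order they leave the chip.
    """
    rows = [list(r) for r in image]      # copy so the caller's data is untouched
    n_cols = len(rows[0])
    voltages = []
    for _ in range(len(rows)):
        # Parallel shift: the row next to the serial register moves into it,
        # and every other row moves one step closer.
        serial = rows.pop()
        rows.insert(0, [0] * n_cols)
        # Serial shift: the last capacitor dumps its charge into the
        # charge amplifier, and each remaining charge moves to its neighbor.
        for _ in range(n_cols):
            charge = serial.pop()
            serial.insert(0, 0)
            voltages.append(gain * charge)
    return voltages


print(read_out([[1, 2], [3, 4]]))  # [4.0, 3.0, 2.0, 1.0]
```

The pixel nearest the amplifier is read first, so the sequence of voltages has to be reordered by the controlling circuit or software to rebuild the picture.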

Detailed physics of operation

The photoactive region of the CCD is, generally, an epitaxial layer of silicon. It has a doping of p+ (boron) and is grown upon the substrate material, often p++. In buried channel devices, the type of design used in most modern CCDs, certain areas of the surface of the silicon are ion implanted with phosphorus, giving them an n-doped designation. This region defines the channel in which the photogenerated charge packets will travel. The gate oxide, that is, the capacitor dielectric, is grown on top of the epitaxial layer and substrate. Later in the process, polysilicon gates are deposited by chemical vapor deposition, patterned with photolithography, and etched in such a way that the separately phased gates lie perpendicular to the channels. The channels are further defined by using the LOCOS process to produce the channel stop region. Channel stops are thermally grown oxides that serve to isolate the charge packets in one column from those in another. These channel stops are produced before the polysilicon gates are, as the LOCOS process uses a high-temperature step that would destroy the gate material. The channel stops are parallel to, and exclusive of, the channel, or "charge carrying," regions. Channel stops often have a p+ doped region underlying them, providing a further barrier to the electrons in the charge packets (this discussion of the physics of CCD devices assumes an electron transfer device, though hole transfer is possible).

Clocking the gates alternately high and low forward and reverse biases the diode formed by the buried channel (n-doped) and the epitaxial layer (p-doped). This causes the CCD to deplete near the p-n junction, and it collects and moves the charge packets beneath the gates, and within the channels, of the device.

CCD manufacturing and operation can be optimized for different uses. The above process describes a frame transfer CCD. While CCDs may be manufactured on a heavily doped p++ wafer, it is also possible to manufacture a device inside p-wells that have been placed on an n-wafer. This second method, reportedly, reduces smear, dark current, and infrared and red response. This method of manufacture is used in the construction of interline transfer devices.

Architecture

CCD image sensors can be implemented in several different architectures. The most common are full-frame, frame-transfer, and interline. The distinguishing characteristic of each of these architectures is its approach to the problem of shuttering.

In a full-frame device, all of the image area is active and there is no electronic shutter. A mechanical shutter must be added to this type of sensor or the image will smear as the device is clocked or read out.

With a frame transfer CCD, half of the silicon area is covered by an opaque mask (typically aluminum). The image can be quickly transferred from the image area to the opaque area or storage region with acceptable smear of a few percent. That image can then be read out slowly from the storage region while a new image is integrating or exposing in the active area. Frame-transfer devices typically do not require a mechanical shutter and were a common architecture for early solid-state broadcast cameras. The downside to the frame-transfer architecture is that it requires twice the silicon real estate of an equivalent full-frame device; hence, it costs roughly twice as much.

The interline architecture extends this concept one step further and masks every other column of the image sensor for storage. In this device, only one pixel shift has to occur to transfer from image area to storage area; thus, shutter times can be less than a microsecond and smear is essentially eliminated. The advantage is not free, however, as the imaging area is now covered by opaque strips, dropping the fill factor to approximately 50 percent and the effective quantum efficiency by an equivalent amount. Modern designs have addressed this deleterious characteristic by adding microlenses on the surface of the device to direct light away from the opaque regions and onto the active area. Microlenses can bring the fill factor back up to 90 percent or more, depending on pixel size and the overall system's optical design.
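As a rough illustration of the trade-off, the effective quantum efficiency can be treated as the quantum efficiency of the active silicon scaled by the fill factor. The function name and the sample numbers below are hypothetical and chosen only to show the arithmetic.

```python
def effective_quantum_efficiency(active_area_qe, fill_factor):
    """Scale the quantum efficiency of the active silicon by the fraction
    of each pixel that actually collects light (simplified model)."""
    if not (0.0 <= active_area_qe <= 1.0 and 0.0 <= fill_factor <= 1.0):
        raise ValueError("both arguments must be fractions between 0 and 1")
    return active_area_qe * fill_factor


# Hypothetical sensor whose active silicon has a quantum efficiency of 0.60
masked = effective_quantum_efficiency(0.60, 0.50)      # opaque strips, no microlenses
with_lenses = effective_quantum_efficiency(0.60, 0.90)  # microlenses restore fill factor
print(masked, with_lenses)
```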

The choice of architecture comes down to one of utility. If the application cannot tolerate an expensive, failure-prone, power-hungry mechanical shutter, then an interline device is the right choice. Consumer snapshot cameras have used interline devices. On the other hand, for applications that require the best possible light collection and for which cost, power, and time are less important, the full-frame device will be the right choice. Astronomers tend to prefer full-frame devices. The frame-transfer device falls in between and was a common choice before the fill-factor issue of interline devices was addressed. Today, the choice of frame-transfer is usually made when an interline architecture is not available, such as in a back-illuminated device.

CCDs containing grids of pixels are used in digital cameras, optical scanners and video cameras as light-sensing devices. They commonly respond to 70 percent of the incident light (meaning a quantum efficiency of about 70 percent) making them far more efficient than photographic film, which captures only about 2 percent of the incident light.

Most common types of CCDs are sensitive to near-infrared light, which allows infrared photography, night-vision devices, and zero lux (or near zero lux) video recording and photography. For normal silicon-based detectors, the sensitivity is limited to wavelengths up to about 1.1 μm. One other consequence of their sensitivity to infrared is that infrared from remote controls will often appear on CCD-based digital cameras or camcorders if they don't have infrared blockers.
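The 1.1 μm limit follows from the band gap of silicon: a photon can only free an electron if its energy is at least the band-gap energy, which gives a cutoff wavelength of hc/E. The short sketch below works through that arithmetic; the band-gap value of about 1.12 eV (room temperature) is an assumption brought in for the example and is not stated in the text above.

```python
PLANCK_J_S = 6.62607015e-34           # Planck constant, J*s
LIGHT_SPEED_M_S = 299_792_458.0       # speed of light, m/s
ELECTRON_VOLT_J = 1.602176634e-19     # one electron volt in joules


def cutoff_wavelength_um(band_gap_ev):
    """Longest wavelength (in micrometers) whose photons still carry
    enough energy to lift an electron across the given band gap."""
    energy_j = band_gap_ev * ELECTRON_VOLT_J
    return PLANCK_J_S * LIGHT_SPEED_M_S / energy_j * 1e6


# Assumed room-temperature band gap of silicon, about 1.12 eV
print(round(cutoff_wavelength_um(1.12), 3))  # 1.107
```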

Cooling reduces the array's dark current, improving the sensitivity of the CCD to low light intensities, even for ultraviolet and visible wavelengths. Professional observatories will often cool their detectors with liquid nitrogen, to reduce the dark current, and hence the thermal noise, to negligible levels.

CCDs in astronomy

CCDs offer high quantum efficiencies, linearity of output (one count for one photon of light), and ease of use compared to photographic plates. For these and a variety of other reasons, CCDs were rapidly adopted by astronomers for nearly all UV-to-infrared applications.

Thermal noise, dark current, and cosmic rays may alter the pixels in the CCD array. To counter such effects, astronomers take several exposures with the CCD shutter closed and several with it open. Averaging the images taken with the shutter closed lowers the random noise. The resulting average “dark frame” is then subtracted from the open-shutter image to remove the dark current and other systematic defects in the CCD (dead pixels, hot pixels, and so forth). The Hubble Space Telescope, in particular, has a highly developed series of steps (“data reduction pipeline”) used to convert the raw CCD data to useful images. See [3] for a more in-depth description of the steps in processing astronomical CCD data.
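The dark-frame procedure can be sketched as follows. This is a simplified illustration using plain Python lists; the function names are hypothetical, and a real reduction pipeline would include further steps (such as bias and flat-field corrections) that are not shown here.

```python
def mean_frame(frames):
    """Average several equally sized frames pixel by pixel."""
    n = len(frames)
    n_rows, n_cols = len(frames[0]), len(frames[0][0])
    return [
        [sum(frame[r][c] for frame in frames) / n for c in range(n_cols)]
        for r in range(n_rows)
    ]


def subtract_dark(light_frame, dark_frames):
    """Subtract the averaged closed-shutter frame from an open-shutter frame."""
    master_dark = mean_frame(dark_frames)
    return [
        [light - dark for light, dark in zip(light_row, dark_row)]
        for light_row, dark_row in zip(light_frame, master_dark)
    ]


# Two closed-shutter exposures; the lower-left pixel is a "hot" pixel.
darks = [
    [[10, 12], [50, 10]],
    [[12, 10], [54, 10]],
]
light = [[111, 61], [152, 10]]
print(subtract_dark(light, darks))  # [[100.0, 50.0], [100.0, 0.0]]
```

Averaging more closed-shutter frames reduces the random noise that the subtraction would otherwise add to the final image.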

CCD cameras used in astrophotography often require sturdy mounts to cope with vibrations and breezes, along with the tremendous weight of most imaging platforms. To take long exposures of galaxies and nebulae, many astronomers use a technique known as auto-guiding. Most autoguiders use a second CCD chip to monitor deviations during imaging. This chip can rapidly detect errors in tracking and command the mount's motors to correct for them.

An unusual astronomical application of CCDs, called "drift-scanning," is to use a CCD to make a fixed telescope behave like a tracking telescope and follow the motion of the sky. The charges in the CCD are transferred and read in a direction parallel to the motion of the sky, and at the same speed. In this way, the telescope can image a larger region of the sky than its normal field of view. The Sloan Digital Sky Survey is the most famous example of this, using the technique to produce the largest uniform survey of the sky yet.
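To match the motion of the sky, the rows must be shifted at the rate at which a star image crosses them. A minimal sketch of that calculation is given below, assuming a fixed telescope and using the sidereal rate of about 15.041 arcseconds per second at the celestial equator; the function name and the pixel scale in the example are hypothetical and do not describe any particular survey.

```python
import math

SIDEREAL_RATE_ARCSEC_PER_S = 15.041  # apparent drift of the sky at declination 0


def drift_scan_row_rate(pixel_scale_arcsec, declination_deg):
    """Rows per second the CCD must shift so the charge keeps pace with
    a star drifting across a fixed telescope (simplified geometry)."""
    sky_speed = SIDEREAL_RATE_ARCSEC_PER_S * math.cos(math.radians(declination_deg))
    return sky_speed / pixel_scale_arcsec


# Hypothetical camera with 0.4 arcsecond pixels
print(drift_scan_row_rate(0.4, 0.0))   # rate on the celestial equator
print(drift_scan_row_rate(0.4, 60.0))  # about half that rate at declination 60
```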

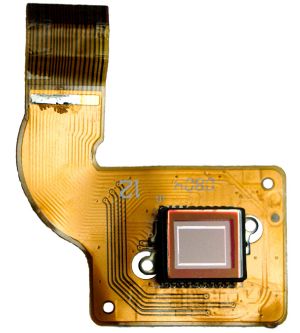

Color cameras

Digital color cameras generally use a Bayer mask over the CCD. Each square of four pixels has one pixel filtered red, one blue, and two green (the human eye is more sensitive to green than to either red or blue). As a result, luminance information is collected at every pixel, but the color resolution is lower than the luminance resolution.
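The layout of the mask can be illustrated with a short sketch. The arrangement below puts red in the top-left corner of each two-by-two square; that ordering is an assumption for the example, since the corner in which each color sits varies between sensors, and the function names are hypothetical.

```python
def bayer_filter_color(row, col):
    """Return the filter color over a pixel for an assumed R-G / G-B layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def apply_bayer_mask(rgb_image):
    """Keep only the color component that each pixel's filter lets through.

    rgb_image: list of rows of (red, green, blue) tuples.
    Returns one number per pixel, as a single-chip sensor would record.
    """
    channel = {"R": 0, "G": 1, "B": 2}
    return [
        [pixel[channel[bayer_filter_color(r, c)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(rgb_image)
    ]


pattern = [[bayer_filter_color(r, c) for c in range(4)] for r in range(2)]
print(pattern)  # [['R', 'G', 'R', 'G'], ['G', 'B', 'G', 'B']]
```

Each pixel records only one color, so the two missing components have to be interpolated from neighboring pixels, which is why the color resolution is lower than the luminance resolution.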

Better color separation can be reached by three-CCD devices (3CCD) and a dichroic beam splitter prism, which splits the image into red, green, and blue components. Each of the three CCDs is arranged to respond to a particular color. Some semi-professional digital video camcorders (and most professional ones) use this technique. Another advantage of 3CCD over a Bayer mask device is higher quantum efficiency (and therefore higher light sensitivity for a given aperture size). This is because in a 3CCD device most of the light entering the aperture is captured by a sensor, while a Bayer mask absorbs a high proportion (about 2/3) of the light falling on each CCD pixel.

Since a very-high-resolution CCD chip is very expensive as of 2005, a 3CCD high-resolution still camera would be beyond the price range even of many professional photographers. There are some high-end still cameras that use a rotating color filter to achieve both color-fidelity and high-resolution. These multi-shot cameras are rare and can only photograph objects that are not moving.

Sensor sizes

Sensors (CCD/CMOS) are often referred to with an imperial fraction designation such as 1/1.8" or 2/3". This measurement originates in the 1950s and the time of Vidicon tubes. Compact digital cameras typically have much smaller sensors than a digital SLR and are thus less sensitive to light and inherently more prone to noise. Some examples of the CCDs found in modern cameras are listed in the following table, from a Digital Photography Review article.

| Type | Aspect Ratio | Width (mm) | Height (mm) | Diagonal (mm) | Area (mm²) | Relative Area |
|---|---|---|---|---|---|---|
| 1/6" | 4:3 | 2.300 | 1.730 | 2.878 | 3.979 | 1.000 |
| 1/4" | 4:3 | 3.200 | 2.400 | 4.000 | 7.680 | 1.930 |
| 1/3.6" | 4:3 | 4.000 | 3.000 | 5.000 | 12.000 | 3.016 |
| 1/3.2" | 4:3 | 4.536 | 3.416 | 5.678 | 15.495 | 3.894 |
| 1/3" | 4:3 | 4.800 | 3.600 | 6.000 | 17.280 | 4.343 |
| 1/2.7" | 4:3 | 5.270 | 3.960 | 6.592 | 20.869 | 5.245 |
| 1/2" | 4:3 | 6.400 | 4.800 | 8.000 | 30.720 | 7.721 |
| 1/1.8" | 4:3 | 7.176 | 5.319 | 8.932 | 38.169 | 9.593 |
| 2/3" | 4:3 | 8.800 | 6.600 | 11.000 | 58.080 | 14.597 |
| 1" | 4:3 | 12.800 | 9.600 | 16.000 | 122.880 | 30.882 |
| 4/3" | 4:3 | 18.000 | 13.500 | 22.500 | 243.000 | 61.070 |
| Other image sizes as a comparison | | | | | | |
| APS-C | 3:2 | 25.100 | 16.700 | 30.148 | 419.170 | 105.346 |
| 35mm | 3:2 | 36.000 | 24.000 | 43.267 | 864.000 | 217.140 |
| 645 | 4:3 | 56.000 | 41.500 | 69.701 | 2324.000 | 584.066 |
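The diagonal, area, and relative-area figures in the table follow directly from each sensor's width and height, with the 1/6" sensor as the reference for relative area. The sketch below reproduces that arithmetic; the function name is hypothetical.

```python
import math

# Area of the 1/6" sensor (2.300 mm x 1.730 mm), used as the reference
REFERENCE_AREA_MM2 = 2.300 * 1.730


def sensor_geometry(width_mm, height_mm):
    """Return (diagonal in mm, area in mm^2, area relative to a 1/6" sensor)."""
    diagonal = math.hypot(width_mm, height_mm)
    area = width_mm * height_mm
    return diagonal, area, area / REFERENCE_AREA_MM2


for name, width, height in [('1/6"', 2.300, 1.730), ("35mm", 36.000, 24.000)]:
    diagonal, area, relative = sensor_geometry(width, height)
    print(f"{name}: diagonal {diagonal:.3f} mm, area {area:.3f} mm2, relative {relative:.3f}")
```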

See also

- Astronomy

- Camcorder

- Camera

- Capacitor

- Digital electronics

- Electron microscope

- Electronics

- Imaging technology

- Semiconductor

- Sensor

- Spectroscopy

Notes

- [1] B. Johnstone, We Were Burning: Japanese Entrepreneurs and the Forging of the Electronic Age (New York, NY: Basic Books, 1999, ISBN 9780465091171).

- [2] NAE, Charles Stark Draper Award.

- [3] O. Hainaut, Basic CCD image processing. Retrieved December 15, 2008.

References

- Holst, Gerald C., and Terrence S. Lomheim. 2007. CMOS/CCD Sensors and Camera Systems. Winter Park, FL: JCD Publishing; Bellingham, WA: SPIE. ISBN 0819467308.

- Janesick, James R. 2001. Scientific Charge-Coupled Devices. Bellingham, WA: SPIE Press. ISBN 0819436984.

- Theuwissen, A.J. 1995. Solid-State Imaging with Charge-Coupled Devices. Dordrecht, NL: Kluwer Academic Publishers. ISBN 0792334566.

External links

All links retrieved December 3, 2023.

- Journal Article On Basics of CCDs.

- Nikon microscopy introduction to CCDs.

- Concepts in Digital Imaging Technology.

- CCD vs. CMOS technical comparison.

Credits

New World Encyclopedia writers and editors rewrote and completed the Wikipedia article in accordance with New World Encyclopedia standards. This article abides by terms of the Creative Commons CC-by-sa 3.0 License (CC-by-sa), which may be used and disseminated with proper attribution. Credit is due under the terms of this license that can reference both the New World Encyclopedia contributors and the selfless volunteer contributors of the Wikimedia Foundation.

Note: Some restrictions may apply to use of individual images which are separately licensed.