Search engine optimization

Search engine optimization (SEO) is the process of improving the volume and quality of traffic to a web site from search engines via "natural" ("organic" or "algorithmic") search results. Usually, the earlier a site is presented in the search results, or the higher it "ranks," the more searchers will visit that site. SEO can also target different kinds of search, including image search, local search, and industry-specific vertical search engines.

As an Internet marketing strategy, SEO considers how search engines work and what people search for. Optimizing a website primarily involves editing its content and HTML coding, both to increase its relevance to specific keywords and to remove barriers to the indexing activities of search engines.

The acronym "SEO" can also refer to "search engine optimizers," a term adopted by an industry of consultants who carry out optimization projects on behalf of clients and by employees who perform SEO services in-house. Search engine optimizers may offer SEO as a stand-alone service or as a part of a broader marketing campaign. Because effective SEO may require changes to the HTML source code of a site, SEO tactics may be incorporated into web site development and design. The term "search engine friendly" may be used to describe web site designs, menus, content management systems and shopping carts that are easy to optimize.

Another class of techniques, known as black hat SEO or spamdexing, uses methods such as link farms and keyword stuffing that degrade both the relevance of search results and the user experience of search engines. Search engines look for sites that use these techniques in order to remove them from their indices.

History

Webmasters and content providers began optimizing sites for search engines in the mid-1990s, as the first search engines were cataloging the early Web. Initially, all a webmaster needed to do was submit a page, or URL, to the various engines, which would send a spider to "crawl" that page, extract links to other pages from it, and return information found on the page to be indexed. In this process, a search engine spider downloads a page and stores it on the search engine's own server. A second program, known as an indexer, then extracts various information about the page, such as the words it contains, where they are located, and any weight given to specific words, along with all the links the page contains. Those links are placed into a scheduler for crawling at a later date.
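The spider, indexer, and scheduler cycle can be illustrated with a toy Python model. This is a minimal sketch under stated assumptions: a small hypothetical in-memory "web" (the `WEB` dictionary) stands in for real downloads, the hypothetical `PageParser` class plays the indexer by recording each word's position and each outgoing link, and a queue plays the scheduler. Real crawlers are far more elaborate.

```python
from collections import defaultdict, deque
from html.parser import HTMLParser

# Hypothetical in-memory "web" standing in for real HTTP downloads.
WEB = {
    "/": '<p>Welcome to search engine basics</p><a href="/spiders">spiders</a>',
    "/spiders": '<p>A spider follows links</p><a href="/">home</a><a href="/index">index</a>',
    "/index": "<p>An indexer records words and positions</p>",
}


class PageParser(HTMLParser):
    """Indexer stand-in: collects the words and outgoing links of one page."""

    def __init__(self):
        super().__init__()
        self.words, self.links = [], []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

    def handle_data(self, data):
        self.words.extend(w.lower() for w in data.split())


def crawl(seed):
    """Spider loop: fetch, index words with positions, schedule new links."""
    index = defaultdict(list)            # word -> [(url, position), ...]
    scheduler, seen = deque([seed]), {seed}
    while scheduler:
        url = scheduler.popleft()
        html = WEB.get(url)              # "download" the page
        if html is None:
            continue
        parser = PageParser()
        parser.feed(html)
        parser.close()
        for pos, word in enumerate(parser.words):
            index[word].append((url, pos))
        for link in parser.links:        # queue unseen links for later crawling
            if link not in seen:
                seen.add(link)
                scheduler.append(link)
    return index
```

For example, `crawl("/")["spider"]` returns `[("/spiders", 1)]`: the word was found on `/spiders`, a page the spider discovered only by following a link from the seed page.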

Site owners started to recognize the value of having their sites highly ranked and visible in search engine results, creating an opportunity for both white hat and black hat SEO practitioners. According to industry analyst Danny Sullivan, the earliest known use of the phrase "search engine optimization" was in 1997.[1]

Early versions of search algorithms relied on webmaster-provided information such as the keyword meta tag, or index files in engines like ALIWEB. Meta tags provided a guide to each page's content. But using metadata to index pages proved unreliable, because the keywords webmasters listed in the meta tag did not always reflect the site's actual content. Inaccurate, incomplete, and inconsistent data in meta tags caused pages to rank for irrelevant searches. Web content providers also manipulated a number of attributes within the HTML source of a page in an attempt to rank well in search engines.[2]

By relying so heavily on factors exclusively within a webmaster's control, early search engines suffered from abuse and ranking manipulation. To provide better results to their users, search engines had to ensure that their results pages showed the most relevant pages rather than unrelated pages stuffed with keywords by unscrupulous webmasters. Because a search engine's success and popularity depend on its ability to produce the most relevant results for any given search, allowing poor or manipulated results to appear would drive users to other search sources. Search engines responded by developing more complex ranking algorithms that took into account additional factors that were more difficult for webmasters to manipulate.

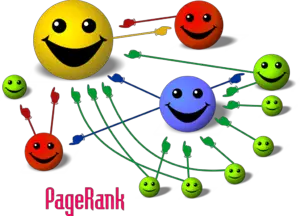

While graduate students at Stanford University, Larry Page and Sergey Brin developed "BackRub," a search engine that relied on a mathematical algorithm to rate the prominence of web pages. The number calculated by the algorithm, PageRank, is a function of the quantity and strength of inbound links.[3] PageRank estimates the likelihood that a given page will be reached by a web user who surfs the web at random, following links from one page to another. In effect, this means that some links are stronger than others: a page with a higher PageRank is more likely to be reached by the random surfer, and so are the pages it links to.
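The random-surfer idea can be sketched with the widely used power-iteration formulation of PageRank. In this minimal Python sketch, the surfer follows a random outgoing link with probability `d` and otherwise jumps to a random page. The damping factor `d = 0.85` follows the value suggested in Brin and Page's paper; normalizing the jump term by the number of pages `n`, spreading rank from pages without outgoing links evenly, and using a fixed iteration count are common textbook choices assumed here, not details of Google's production system. The `pagerank` function and the example graph are hypothetical.

```python
def pagerank(links, d=0.85, iterations=50):
    """Power-iteration PageRank over a dict: page -> list of pages it links to."""
    pages = sorted(set(links) | {q for out in links.values() for q in out})
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}      # start the surfer anywhere, uniformly
    for _ in range(iterations):
        # Rank held by pages with no outgoing links is spread evenly (random jump).
        dangling = sum(rank[p] for p in pages if not links.get(p))
        new = {p: (1 - d) / n + d * dangling / n for p in pages}
        for p in pages:
            out = links.get(p, [])
            for q in out:                   # each link passes an equal share of p's rank
                new[q] += d * rank[p] / len(out)
        rank = new
    return rank


# Hypothetical four-page web: C has three inbound links, D has none.
graph = {"A": ["B", "C"], "B": ["C"], "C": ["A"], "D": ["C"]}
ranks = pagerank(graph)
print(max(ranks, key=ranks.get))  # C
```

Page D, which no page links to, keeps only the baseline jump probability (1 - d)/n = 0.0375, while A ranks second despite having a single inbound link, because that link comes from the strongest page, C.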

Page and Brin founded Google in 1998. Google attracted a loyal following among the growing number of Internet users, who liked its simple design.[4] Google considered off-page factors (such as PageRank and hyperlink analysis) as well as on-page factors (such as keyword frequency, meta tags, headings, links, and site structure), which enabled it to avoid the kind of manipulation seen in search engines that considered only on-page factors for their rankings. Although PageRank was more difficult to game, webmasters had already developed link-building tools and schemes to influence the Inktomi search engine, and these methods proved similarly applicable to gaining PageRank. Many sites focused on exchanging, buying, and selling links, often on a massive scale. Some of these schemes, or link farms, involved the creation of thousands of sites for the sole purpose of link spamming.[5] In recent years, major search engines have begun to rely more heavily on off-web factors such as the age, sex, location, and search history of people conducting searches in order to further refine results.

By 2007, search engines had incorporated a wide range of undisclosed factors in their ranking algorithms to reduce the impact of link manipulation. Google says it ranks sites using more than 200 different signals.[6] The three leading search engines, Google, Yahoo and Microsoft's Live Search, do not disclose the algorithms they use to rank pages. Notable SEOs, such as Rand Fishkin, Barry Schwartz, Aaron Wall and Jill Whalen, have studied different approaches to search engine optimization, and have published their opinions in online forums and blogs.[7]

Webmasters and search engines

By 1997 search engines recognized that webmasters were making efforts to rank well in their search engines, and that some webmasters were even manipulating their rankings in search results by stuffing pages with excessive or irrelevant keywords. Early search engines, such as Infoseek, adjusted their algorithms in an effort to prevent webmasters from manipulating rankings.[8]

Due to the high marketing value of targeted search results, there is potential for an adversarial relationship between search engines and SEOs. In 2005, an annual conference, AIRWeb (Adversarial Information Retrieval on the Web),[9] was created to discuss and minimize the damaging effects of aggressive web content providers.

SEO companies that employ overly aggressive techniques can get their client websites banned from the search results. In 2005, the Wall Street Journal reported on a company, Traffic Power, which allegedly used high-risk techniques and failed to disclose those risks to its clients.[10] Google's Matt Cutts later confirmed that Google did in fact ban Traffic Power and some of its clients.[11]

Some search engines have also reached out to the SEO industry, and are frequent sponsors and guests at SEO conferences, chats, and seminars. In fact, with the advent of paid inclusion, some search engines now have a vested interest in the health of the optimization community. Major search engines provide information and guidelines to help with site optimization.[12][13]

Getting indexed

The leading search engines, Google, Yahoo!, and Microsoft, use crawlers to find pages for their algorithmic search results. Pages that are linked from other pages already in a search engine's index do not need to be submitted, because they are found automatically.

Two major directories, the Yahoo Directory and the Open Directory Project, both require manual submission and human editorial review.[14] Google offers Google Webmaster Tools, through which an XML Sitemap feed can be created and submitted for free to help ensure that all pages are found, especially pages that are not discoverable by automatically following links.[15]
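As a rough illustration of what such a feed contains, the Python sketch below builds a minimal XML Sitemap with the standard library's `xml.etree.ElementTree`. It follows the sitemaps.org protocol's `urlset`, `url`, and `loc` structure with an optional `lastmod` date; the `build_sitemap` helper and the example.com URLs are hypothetical, and real sitemaps may also carry other optional fields such as `changefreq` and `priority`.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal sitemap XML string from (loc, lastmod-or-None) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = loc
        if lastmod:                      # lastmod is optional in the protocol
            ET.SubElement(entry, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


print(build_sitemap([
    ("https://example.com/", "2007-03-01"),
    ("https://example.com/about", None),   # hypothetical page with no lastmod
]))
```

Listing a page this way only tells the crawler that the page exists; whether it is actually crawled and indexed remains up to the search engine.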

Search engine crawlers may look at a number of different factors when crawling a site, and not every page is indexed. The distance of a page from the root directory of a site may also be a factor in whether or not it gets crawled.[16]

Preventing indexing

To keep undesirable content out of search indexes, webmasters can instruct spiders not to crawl certain files or directories through the standard robots.txt file in the root directory of the domain. Additionally, a page can be explicitly excluded from a search engine's database by using a robots meta tag. When a search engine visits a site, the robots.txt file located in the root directory is the first file crawled. The robots.txt file is then parsed, and it instructs the robot as to which pages are not to be crawled. Because a search engine crawler may keep a cached copy of this file, it may on occasion crawl pages a webmaster does not wish crawled. Pages typically prevented from being crawled include login-specific pages such as shopping carts and user-specific content such as search results from internal searches. In March 2007, Google warned webmasters that they should prevent indexing of internal search results because those pages are considered search spam.[17]
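Python's standard library includes a robots.txt parser, `urllib.robotparser`, which can show how a well-behaved crawler applies these rules. The minimal sketch below parses a hypothetical robots.txt that blocks a shopping-cart directory and internal search results; the domain, paths, and the `ExampleBot` user agent are illustrative assumptions. Page-level exclusion, by contrast, is declared inside the page itself with a robots meta tag such as `<meta name="robots" content="noindex">`, which this parser does not read.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt, as it might be served from https://example.com/robots.txt
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # load the rules without a network fetch

for url in ("https://example.com/products/shoes",
            "https://example.com/cart/checkout",
            "https://example.com/search?q=shoes"):
    # can_fetch applies the rules for the given user agent to the URL's path.
    allowed = parser.can_fetch("ExampleBot", url)
    print(url, "allowed" if allowed else "blocked")
```

Because `Disallow` rules match by path prefix, the single `/search` line also blocks every internal search-results URL with a query string.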

White hat versus black hat

SEO techniques can be classified into two broad categories: techniques that search engines recommend as part of good design, and those techniques of which search engines do not approve. The search engines attempt to minimize the effect of the latter, among them spamdexing. Industry commentators have classified these methods, and the practitioners who employ them, as either white hat SEO, or black hat SEO. White hats tend to produce results that last a long time, whereas black hats anticipate that their sites may eventually be banned either temporarily or permanently once the search engines discover what they are doing.[18]

An SEO technique is considered white hat if it conforms to the search engines' guidelines and involves no deception. As the search engine guidelines[19][12][13] are not written as a series of rules or commandments, this is an important distinction to note. White hat SEO is not just about following guidelines, but about ensuring that the content a search engine indexes and subsequently ranks is the same content a user will see. White hat advice is generally summed up as creating content for users, not for search engines, and then making that content easily accessible to the spiders, rather than attempting to trick the algorithm away from its intended purpose. White hat SEO is in many ways similar to web development that promotes accessibility,[20] although the two are not identical.

Black hat SEO attempts to improve rankings in ways that are disapproved of by the search engines or that involve deception. One black hat technique uses hidden text, either colored to match the background, placed in an invisible div, or positioned off screen. Another method serves a different page depending on whether the page is being requested by a human visitor or a search engine, a technique known as cloaking.

Search engines may penalize sites they discover using black hat methods, either by reducing their rankings or eliminating their listings from their databases altogether. Such penalties can be applied either automatically by the search engines' algorithms, or by a manual site review. One infamous example was the February 2006 Google removal of both BMW Germany and Ricoh Germany for use of deceptive practices.[21] Both companies, however, quickly apologized, fixed the offending pages, and were restored to Google's list.[22]

As a marketing strategy

Placement at or near the top of the rankings increases the number of searchers who will visit a site. However, more search engine referrals do not guarantee more sales. SEO is not necessarily an appropriate strategy for every website, and other Internet marketing strategies can be much more effective, depending on the site operator's goals. A successful Internet marketing campaign may drive organic traffic to web pages, but it may also involve the use of paid advertising on search engines and other pages, building high-quality web pages to engage and persuade, addressing technical issues that may keep search engines from crawling and indexing those sites, setting up analytics programs to enable site owners to measure their successes, and improving a site's conversion rate.[23]

SEO may generate a return on investment. However, search engines are not paid for organic search traffic, their algorithms change, and there are no guarantees of continued referrals. Because of this lack of guarantees and certainty, a business that relies heavily on search engine traffic can suffer major losses if the search engines stop sending visitors.[24] It is considered wise business practice for website operators to free themselves from dependence on search engine traffic.[25] A top-ranked SEO blog reported, "Search marketers, in a twist of irony, receive a very small share of their traffic from search engines."[26] Instead, their main sources of traffic are links from other websites.

International markets

The search engines' market shares vary from market to market, as does competition. In 2003, Danny Sullivan stated that Google represented about 75 percent of all searches.[27] In markets outside the United States, Google's share is often larger, as much as 90 percent.[28]

Successful search optimization for international markets may require professional translation of web pages, registration of a domain name with a top level domain in the target market, and web hosting that provides a local IP address. Otherwise, the fundamental elements of search optimization are essentially the same, regardless of language.

Legal precedents

On October 17, 2002, SearchKing filed suit in the United States District Court for the Western District of Oklahoma against the search engine Google. SearchKing's claim was that Google's tactics to prevent spamdexing constituted tortious interference with contractual relations. On January 13, 2003, the court granted Google's motion to dismiss the complaint, because Google's PageRanks are entitled to First Amendment protection and because SearchKing "failed to show that Google's actions caused it irreparable injury, as the damages arising from its reduced ranking were too speculative."[29]

In March 2006, KinderStart filed a lawsuit against Google over search engine rankings. KinderStart's website had been removed from Google's index prior to the lawsuit, and traffic to the site dropped by 70 percent. On March 16, 2007, the United States District Court for the Northern District of California (San Jose Division) dismissed KinderStart's complaint without leave to amend and partially granted Google's motion for Rule 11 sanctions against KinderStart's attorney, requiring him to pay part of Google's legal expenses.[30]

Notes

- Danny Sullivan, Who Invented the Term "Search Engine Optimization"? Search Engine Watch, June 14, 2004. See Google groups thread. Retrieved March 27, 2020.

- Glen Pringle, Lloyd Allison, and David L. Dowe, What is a tall poppy among web pages? Proc. 7th Int. World Wide Web Conference, Brisbane, April 1998. Retrieved March 27, 2020.

- Sergey Brin and Larry Page, The Anatomy of a Large-Scale Hypertextual Web Search Engine. Proceedings of the seventh international conference on World Wide Web, 1998. Retrieved March 27, 2020.

- Bill Thompson, Is Google good for you? BBC News, December 19, 2003. Retrieved March 27, 2020.

- Zoltan Gyongyi and Hector Garcia-Molina, Link Spam Alliances. Proceedings of the 31st VLDB Conference, Trondheim, Norway, 2005. Retrieved March 27, 2020.

- Saul Hansell, Google Keeps Tweaking Its Search Engine. The New York Times, June 3, 2007. Retrieved March 27, 2020.

- Search Engine Ranking Factors 2015. Moz. Retrieved March 27, 2020.

- Laurie J. Flynn, Desperately Seeking Surfers. The New York Times, November 11, 1996. Retrieved March 27, 2020.

- AIRWeb. Adversarial Information Retrieval on the Web. Retrieved March 27, 2020.

- David Kesmodel, Sites Get Dropped by Search Engines After Trying to 'Optimize' Rankings. Wall Street Journal, September 22, 2005. Retrieved March 27, 2020.

- Matt Cutts, Confirming a penalty. Gadgets, Google, and SEO, February 2, 2006. Retrieved March 27, 2020.

- Google's Webmaster Guidelines. google.com. Retrieved March 27, 2020.

- Yahoo! Content Quality Guidelines. help.yahoo.com. Retrieved March 27, 2020.

- Submitting To Directories: Yahoo & The Open Directory. Search Engine Watch, March 12, 2007. Retrieved March 27, 2020.

- Learn about sitemaps. google.com. Retrieved March 27, 2020.

- J. Cho, H. Garcia-Molina, and L. Page, Efficient crawling through URL ordering. Proceedings of the seventh conference on World Wide Web, Brisbane, Australia, April 14-18, 1998. Retrieved March 27, 2020.

- Danny Sullivan, Newspapers Amok! New York Times Spamming Google? LA Times Hijacking Cars.com? Search Engine Land, May 8, 2007. Retrieved March 27, 2020.

- Jill Whalen, Black Hat/White Hat Search Engine Optimization. Search Engine Guide, November 16, 2004.

- Do you need an SEO? google.com. Retrieved March 27, 2020.

- Andy Hagans, High Accessibility Is Effective Search Engine Optimization. A List Apart, November 8, 2005. Retrieved March 27, 2020.

- Matt Cutts, Ramping up on international webspam. Gadgets, Google, and SEO, February 4, 2006. Retrieved March 27, 2020.

- Matt Cutts, Recent reinclusions. Gadgets, Google, and SEO, February 7, 2006. Retrieved March 27, 2020.

- Melissa Burdon, The Battle Between Search Engine Optimization and Conversion: Who Wins? H2 Desk, March 31, 2007. Retrieved March 27, 2020.

- Andy Greenberg, Condemned To Google Hell. Forbes, April 30, 2007. Retrieved March 27, 2020.

- Jakob Nielsen, Search Engines as Leeches on the Web. Nielsen Norman Group, January 9, 2006. Retrieved March 27, 2020.

- SEOmoz: Best SEO Blog of 2006. Search Engine Journal, January 3, 2007. Retrieved March 27, 2020.

- Jefferson Graham, The search engine that could. USA Today, August 26, 2003. Retrieved March 27, 2020.

- Search Engine Market Share Worldwide. StatCounter Global Stats. Retrieved March 27, 2020.

- Martin Samson, Search King, Inc. v. Google Technology, Inc. Internet Library of Law and Court Decisions. Retrieved March 27, 2020.

- Eric Goldman, KinderStart v. Google Dismissed–With Sanctions Against KinderStart’s Counsel. Technology & Marketing Law Blog, March 20, 2007. Retrieved March 27, 2020.

References

- Grappone, Jennifer, and Gradiva Couzin. Search Engine Optimization: An Hour a Day. San Francisco, CA: Sybex, 2006. ISBN 978-0471787532

- Konia, Brad S. Search Engine Optimization with WebPosition Gold 2. Wordware web programming/development library. Plano, TX: Wordware Pub, 2002. ISBN 978-0585428475

- Ledford, Jerri L. SEO: Search Engine Optimization Bible. Hoboken, NJ: Wiley, 2008. ISBN 978-0470175002

- Pfanner, Eric. New to Russia, Google Struggles to Find Its Footing. The New York Times, December 18, 2006. Retrieved March 27, 2020.

- Potts, Kevin. Web Design and Marketing Solutions for Business Websites. Berkeley, CA: Friends of Ed, 2007. ISBN 978-1590598399

- Siskind, Gregory H., Deborah McMurray, and Richard P. Klau. The Lawyer's Guide to Marketing on the Internet. Chicago, IL: American Bar Association, 2007. ISBN 978-1590318768

External links

All links retrieved January 25, 2023.

- Google Webmaster Guidelines

- Yahoo! Webmaster Guidelines

- 72 Stats To Understand SEO In 2018 (Infographic)

- 36 Vital SEO Statistics and Facts to Keep in Mind for 2020

Credits

New World Encyclopedia writers and editors rewrote and completed the Wikipedia article in accordance with New World Encyclopedia standards. This article abides by terms of the Creative Commons CC-by-sa 3.0 License (CC-by-sa), which may be used and disseminated with proper attribution. Credit is due under the terms of this license that can reference both the New World Encyclopedia contributors and the selfless volunteer contributors of the Wikimedia Foundation.

Note: Some restrictions may apply to use of individual images which are separately licensed.